Claude API, Straight from the Source

Change One Line, Start Calling

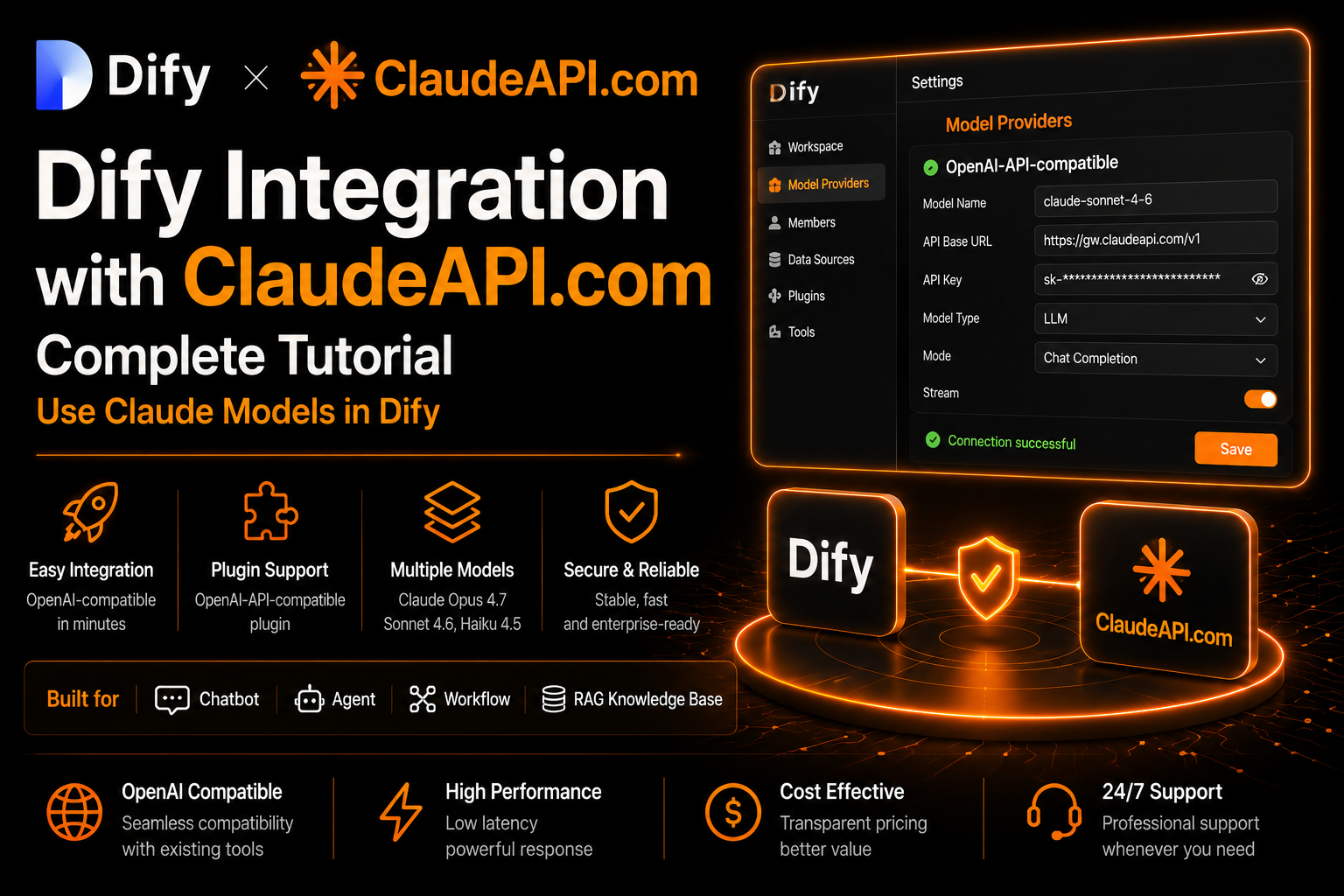

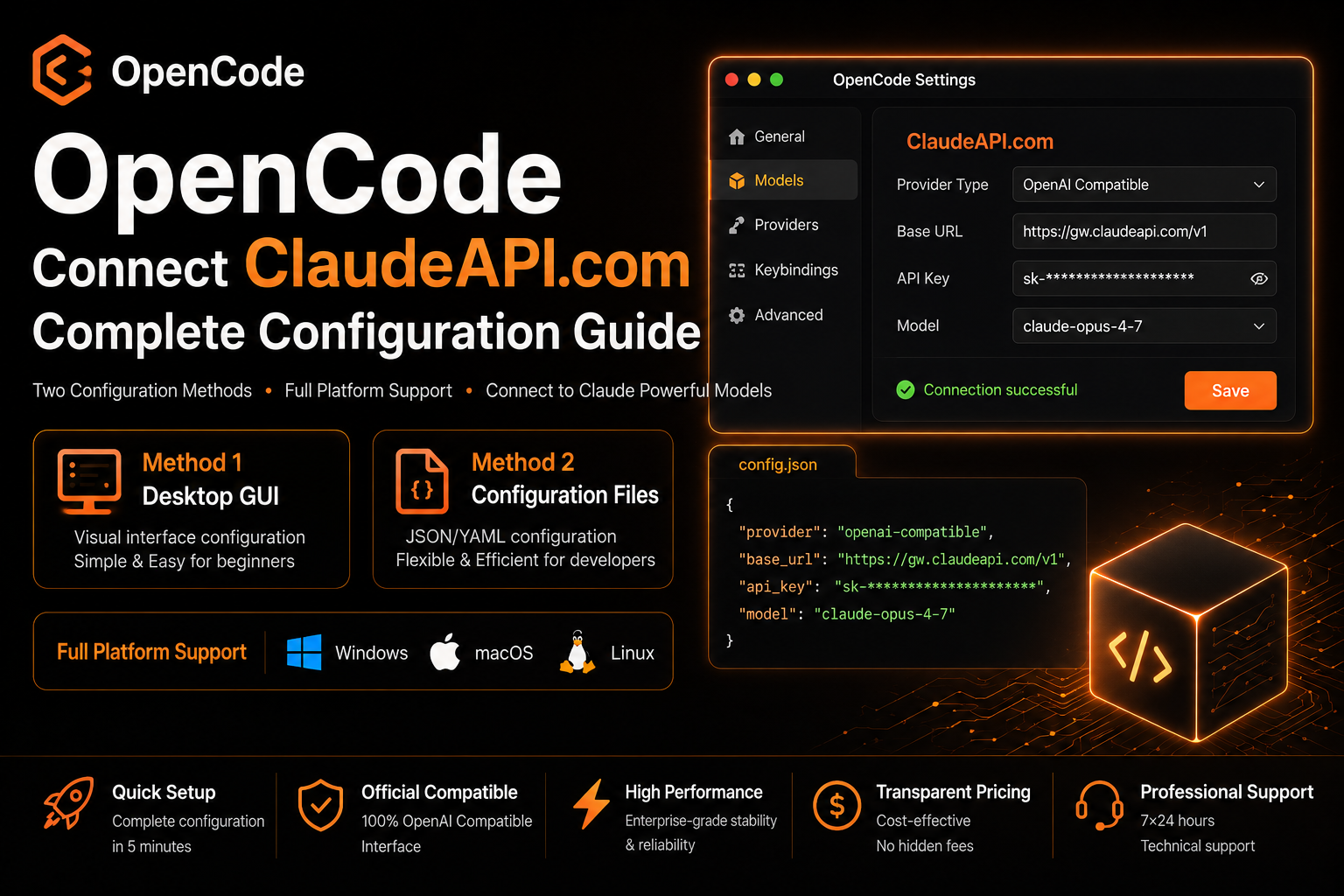

Claude-compatible API gateway for developers using Claude Code, Cursor, Cline, and AI agents.

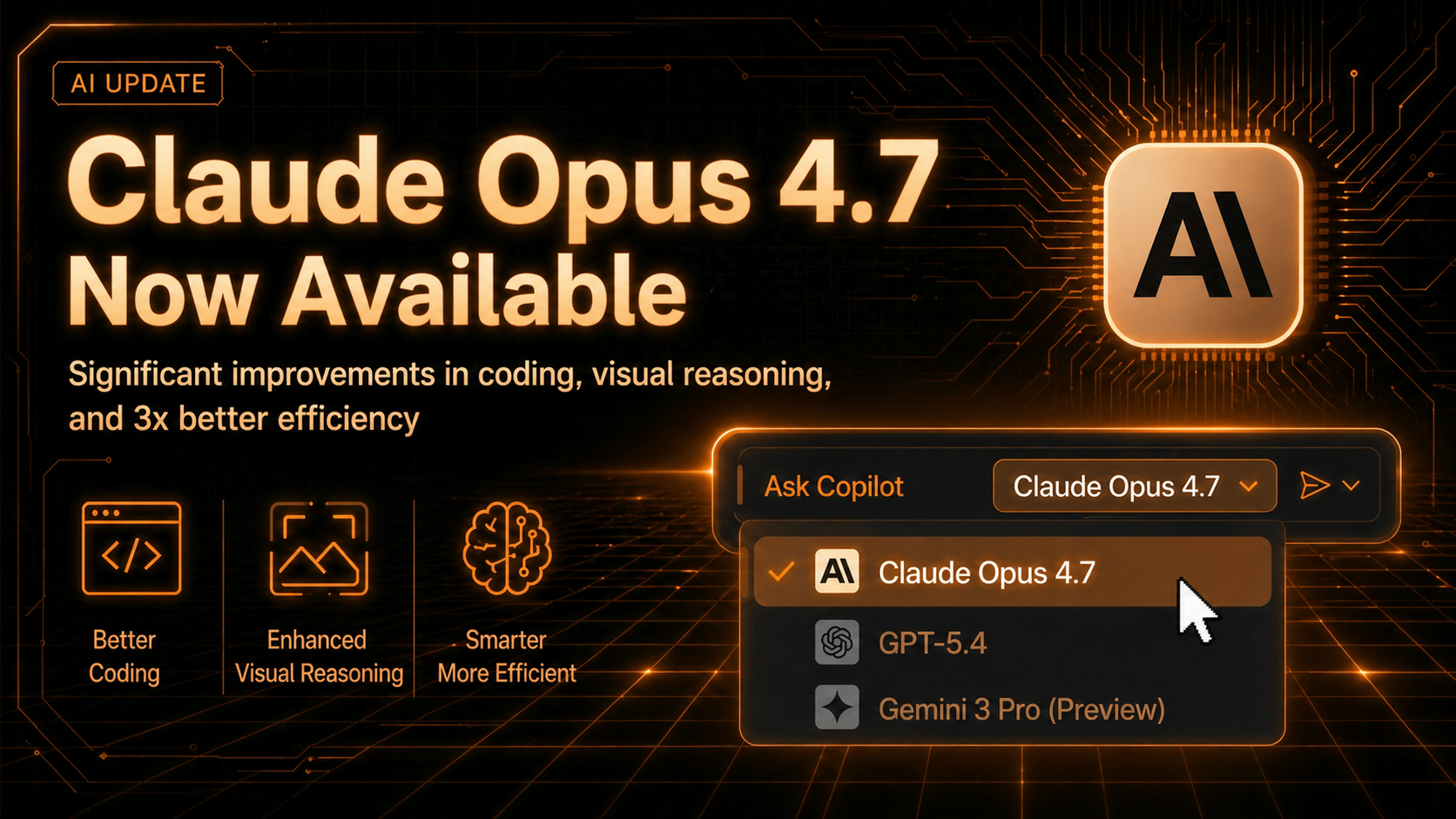

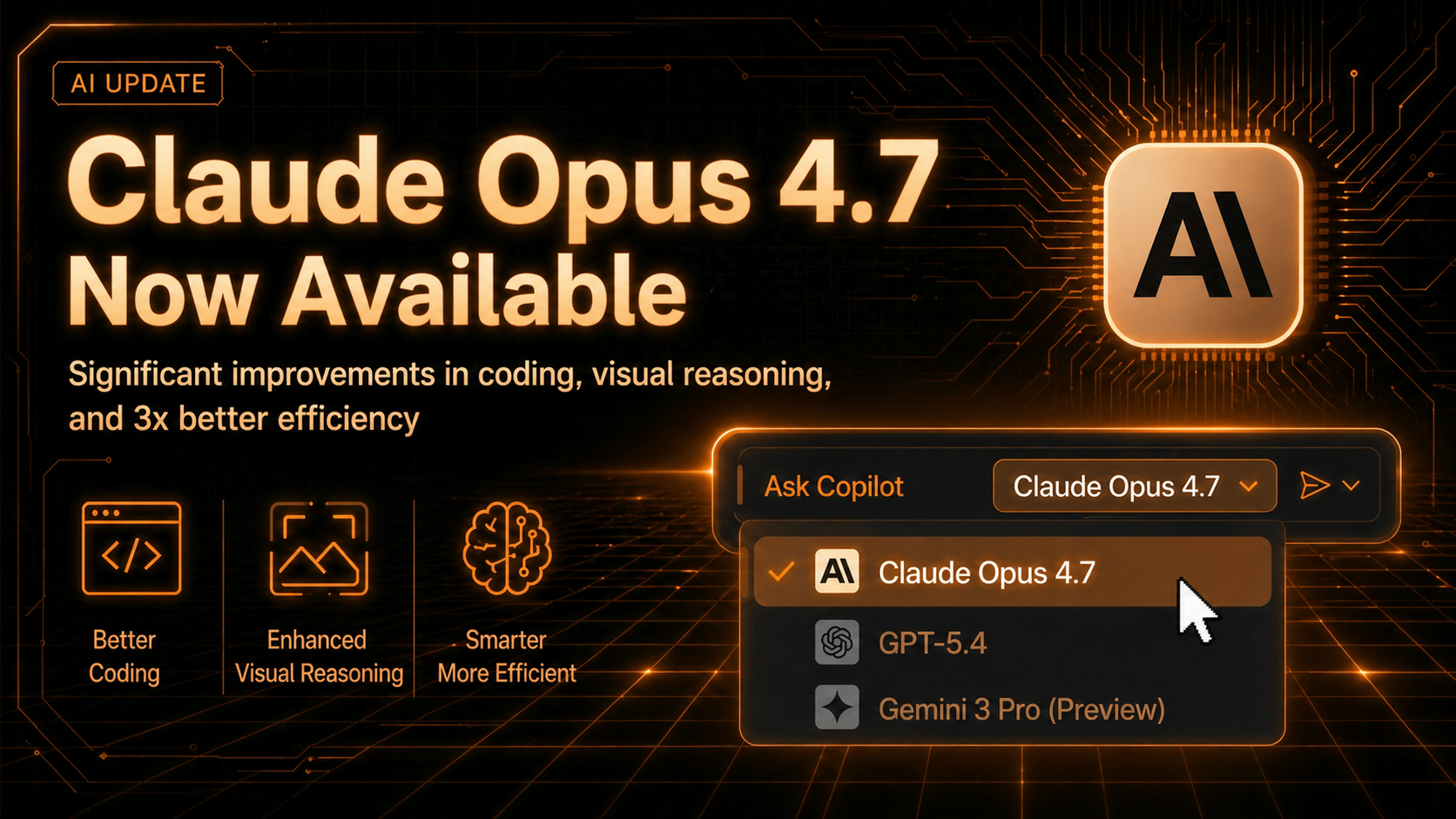

Claude-only focus — day-one model updates, industry-lowest latency

Python:

    import anthropic

    # Point the official SDK at the gateway instead of the default endpoint.
    client = anthropic.Anthropic(
        api_key="your-api-key",
        base_url="https://gw.claudeapi.com",
    )

    message = client.messages.create(
        model="claude-opus-4-7",
        max_tokens=1024,
        messages=[
            {"role": "user", "content": "Hello, Claude!"}
        ],
    )

    # The reply is a list of content blocks; print the text of the first one.
    print(message.content[0].text)

cURL:

    curl https://gw.claudeapi.com/v1/messages \
      -H "x-api-key: $API_KEY" \
      -H "content-type: application/json" \
      -d '{
        "model": "claude-opus-4-7",
        "max_tokens": 1024,
        "messages": [
          {"role": "user", "content": "Hello"}
        ]
      }'

Using Claude API Shouldn't Be This Hard.

Rate limits, unpredictable access, rigid billing — Anthropic doesn't solve these for you. We do.

Rate Limits & Access Restrictions

Waitlists, rate limits, suspensions.

Unstable connectivity, request timeouts

Frequent timeouts, 529 overload errors, inconsistent latency. Not something you can bet your product on.

Payment Friction

Prepaid only, no invoicing.
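Timeouts and 529 overload errors like those listed above are commonly handled on the client with retries and exponential backoff. The following is a minimal sketch of that pattern using only the standard library. `TransientAPIError`, `RETRYABLE`, and `call_with_backoff` are hypothetical names introduced for illustration, and the set of retryable status codes is an assumption. The official `anthropic` SDK also exposes a `max_retries` option on the client, which may be enough on its own.

```python
import random
import time


class TransientAPIError(Exception):
    """Hypothetical error type that carries an HTTP status code."""

    def __init__(self, status_code):
        super().__init__(f"HTTP {status_code}")
        self.status_code = status_code


# Status codes treated as retryable in this sketch (an assumption, not a rule).
RETRYABLE = {429, 500, 529}


def call_with_backoff(fn, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call fn(); on a retryable error, wait and try again.

    The wait before retry number `attempt` is base_delay * 2**attempt,
    plus random jitter of up to half that value.
    """
    for attempt in range(max_attempts):
        try:
            return fn()
        except TransientAPIError as err:
            last_attempt = attempt == max_attempts - 1
            if err.status_code not in RETRYABLE or last_attempt:
                raise  # give up: not retryable, or out of attempts
            delay = base_delay * (2 ** attempt)
            sleep(delay + random.uniform(0, delay / 2))
```

In real code, `fn` would wrap the API call and translate the SDK's or HTTP client's error into whatever exception type you choose to retry on.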

One API Key, Unlock All Claude Models

We're not another 'all-in-one' AI gateway. Everything we build and optimize is centered on Claude, so you get fast access to the latest releases and deeper performance tuning for Claude-based workloads.

Global Low-Latency Access

Multi-region deployment with intelligent routing for reliable access, with no extra proxy setup required.

100% Anthropic SDK Compatible

Just swap the base_url — zero code changes, migrate in seconds.

Pay-as-you-go USD Billing

Only pay for what you use. We accept credit cards. Need invoices for your team? No problem — enterprise billing available.

Dedicated Developer Support

No tickets. No bots. Talk directly to an engineer who can actually solve your problem, via WhatsApp or Telegram.

Swap the base_url. Zero Code Changes.

Compatible with both Anthropic and OpenAI API formats. No refactor required — just point your existing integration to our endpoint.
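One way to keep the endpoint switch out of your source code is to read it from the environment. A minimal sketch, using only the standard library: the variable names `CLAUDE_GATEWAY_API_KEY` and `CLAUDE_GATEWAY_BASE_URL` and the helper `client_kwargs` are hypothetical, while the default URL is the one used in the examples on this page.

```python
import os

# Default endpoint, taken from the examples on this page.
DEFAULT_BASE_URL = "https://gw.claudeapi.com"


def client_kwargs(env=None):
    """Build keyword arguments for anthropic.Anthropic(...) from the environment.

    Both variable names below are hypothetical; rename them to fit your setup.
    """
    env = os.environ if env is None else env
    return {
        "api_key": env["CLAUDE_GATEWAY_API_KEY"],
        "base_url": env.get("CLAUDE_GATEWAY_BASE_URL", DEFAULT_BASE_URL),
    }


# Usage (requires the anthropic package):
#   client = anthropic.Anthropic(**client_kwargs())
```

With this in place, moving between endpoints is a deployment setting rather than a code edit.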

Before (official Anthropic endpoint):

    import anthropic

    client = anthropic.Anthropic(
        api_key="sk-ant-...",
    )

    # Stream the reply and print text chunks as they arrive.
    with client.messages.stream(
        model="claude-opus-4-7",
        max_tokens=1024,
        messages=[
            {"role": "user", "content": "Write a short poem"}
        ],
    ) as stream:
        for text in stream.text_stream:
            print(text, end="", flush=True)

After (gateway endpoint; only the client construction changes):

    import anthropic

    client = anthropic.Anthropic(
        api_key="your-api-key",
        base_url="https://gw.claudeapi.com",
    )

    # Stream the reply and print text chunks as they arrive.
    with client.messages.stream(
        model="claude-opus-4-7",
        max_tokens=1024,
        messages=[
            {"role": "user", "content": "Write a short poem"}
        ],
    ) as stream:
        for text in stream.text_stream:
            print(text, end="", flush=True)

Pay-as-you-go, only pay for what you use

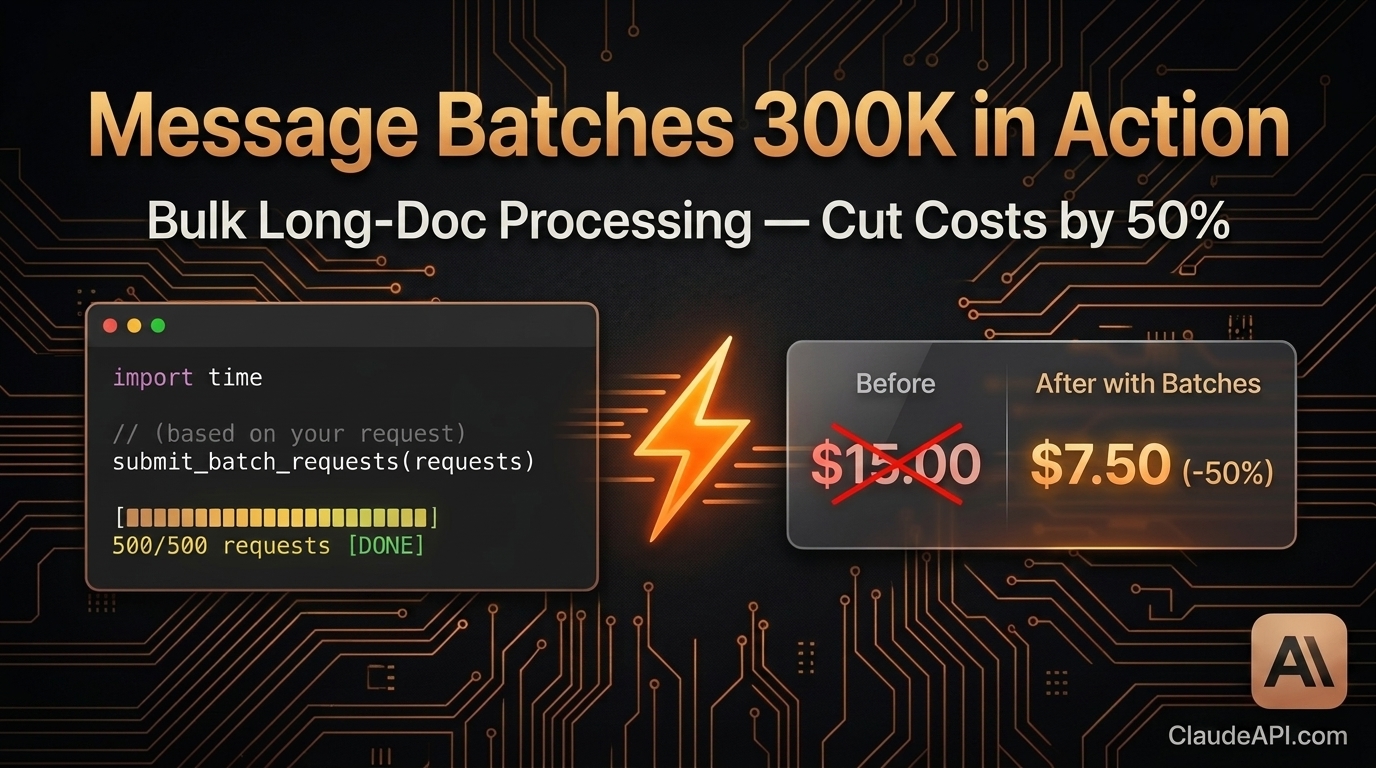

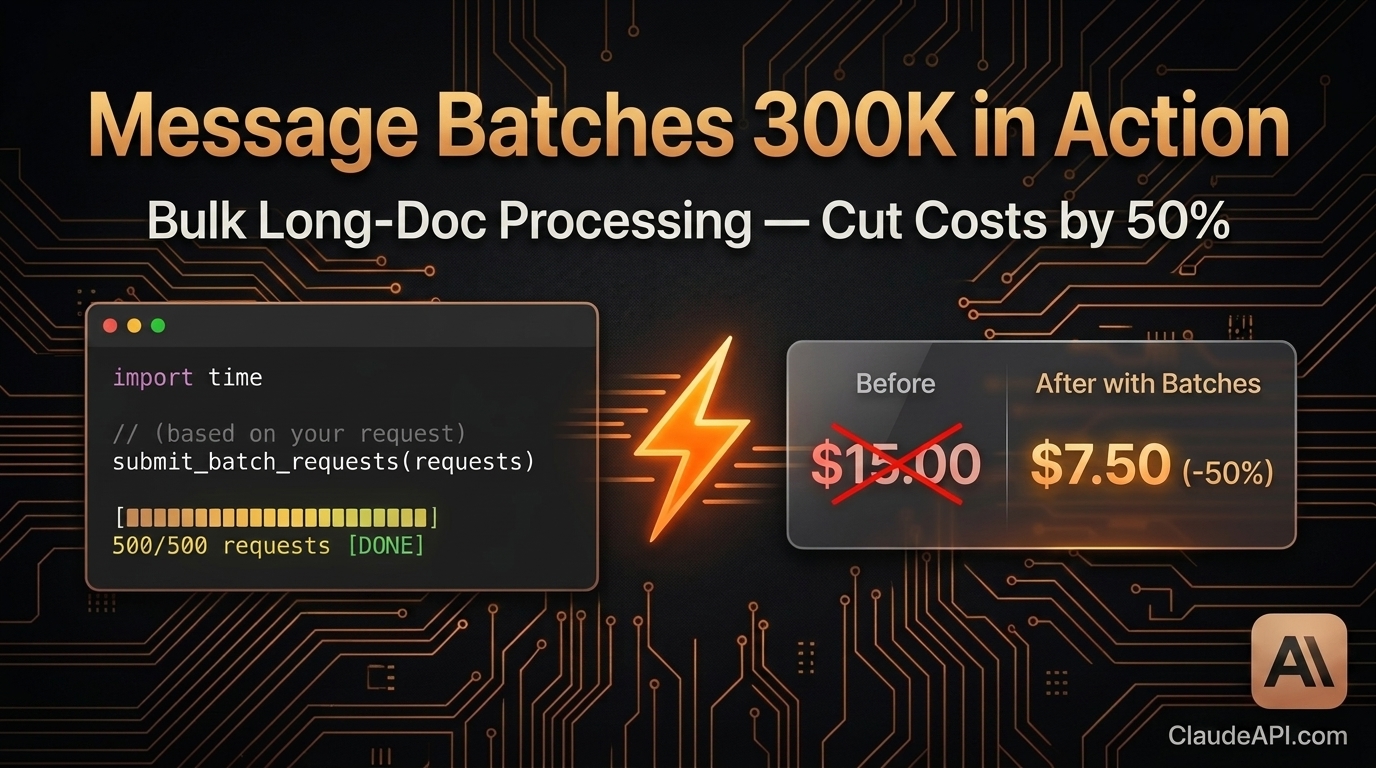

We offer a 20% discount on official pricing, release model updates in sync with the official ones, and charge no minimum spend and no monthly fees.
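To see what the 20% discount means for a given workload, the arithmetic can be sketched as below. The per-million-token prices in the example are placeholders, not actual rates for any model; substitute the current list prices. `estimate_cost_usd` is a hypothetical helper name.

```python
def estimate_cost_usd(input_tokens, output_tokens,
                      input_price_per_mtok, output_price_per_mtok,
                      discount=0.20):
    """Estimate the cost of a workload in USD after a flat discount.

    Prices are expressed in USD per million tokens.
    """
    list_cost = (input_tokens * input_price_per_mtok
                 + output_tokens * output_price_per_mtok) / 1_000_000
    return list_cost * (1 - discount)


# Placeholder prices: 10 USD/MTok input, 50 USD/MTok output (NOT real rates).
cost = estimate_cost_usd(200_000, 50_000, 10.0, 50.0)
print(f"{cost:.2f} USD")
```

Under these placeholder prices the list cost is 4.50 USD (2.00 for input plus 2.50 for output), so the discounted cost comes to 3.60 USD.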

claude-opus-4-7

claude-sonnet-4-6

claude-haiku-4-5-20251001

claude-opus-4-6

claude-sonnet-4-5-20250929

Your Data Security Is Non-Negotiable

Zero Data Retention

Requests are forwarded directly to Anthropic — no logging, no caching. We never store your prompts or responses. Period.

Isolated API Keys

Each user gets a dedicated API channel. No cross-contamination, no shared risk. All traffic is encrypted end-to-end via TLS.

Multi-Region Load Balancing

Active-active deployment across multiple regions. Automatic failover if any node goes down. 99.8% uptime SLA guaranteed.
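For a sense of scale, a 99.8% uptime figure can be converted into a downtime budget. The sketch below assumes the percentage is measured over a fixed calendar window; the measurement period is not stated here, so the 30-day and 365-day windows are assumptions, and `allowed_downtime_minutes` is a hypothetical helper name.

```python
def allowed_downtime_minutes(uptime_percent, period_days):
    """Return the downtime (in minutes) permitted by an uptime percentage."""
    total_minutes = period_days * 24 * 60
    return total_minutes * (100 - uptime_percent) / 100


monthly = allowed_downtime_minutes(99.8, 30)   # assumed 30-day window
yearly = allowed_downtime_minutes(99.8, 365)   # assumed 365-day window
print(f"{monthly:.1f} min per 30 days, {yearly / 60:.1f} h per year")
```

That works out to roughly 86.4 minutes per 30 days, or about 17.5 hours per year.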

What Our Users Say

Real insights and reviews from our users

"When I was calling the Claude API directly, I kept running into latency issues and random timeouts — especially on long context requests, it would just drop mid-stream. Since switching to your API, response times are noticeably faster and I haven't had a single connection failure."

"After switching to your API, even traffic spikes are handled smoothly — their auto-scaling is rock solid. What really impressed me was when we had a config error at 3am, their engineering team responded and helped fix it within 5 minutes. That level of support is rare."

"Your API solved all of these headaches for us. Unified billing, rock-solid connectivity, and 24/7 developer support — we finally get to focus on building our product instead of babysitting API infrastructure."

"We ship fast and constantly need to benchmark different Claude models (Haiku vs Sonnet vs Opus) across various use cases. Your API makes it dead simple to switch between models on the fly, and the detailed token usage analytics help us keep costs under tight control."

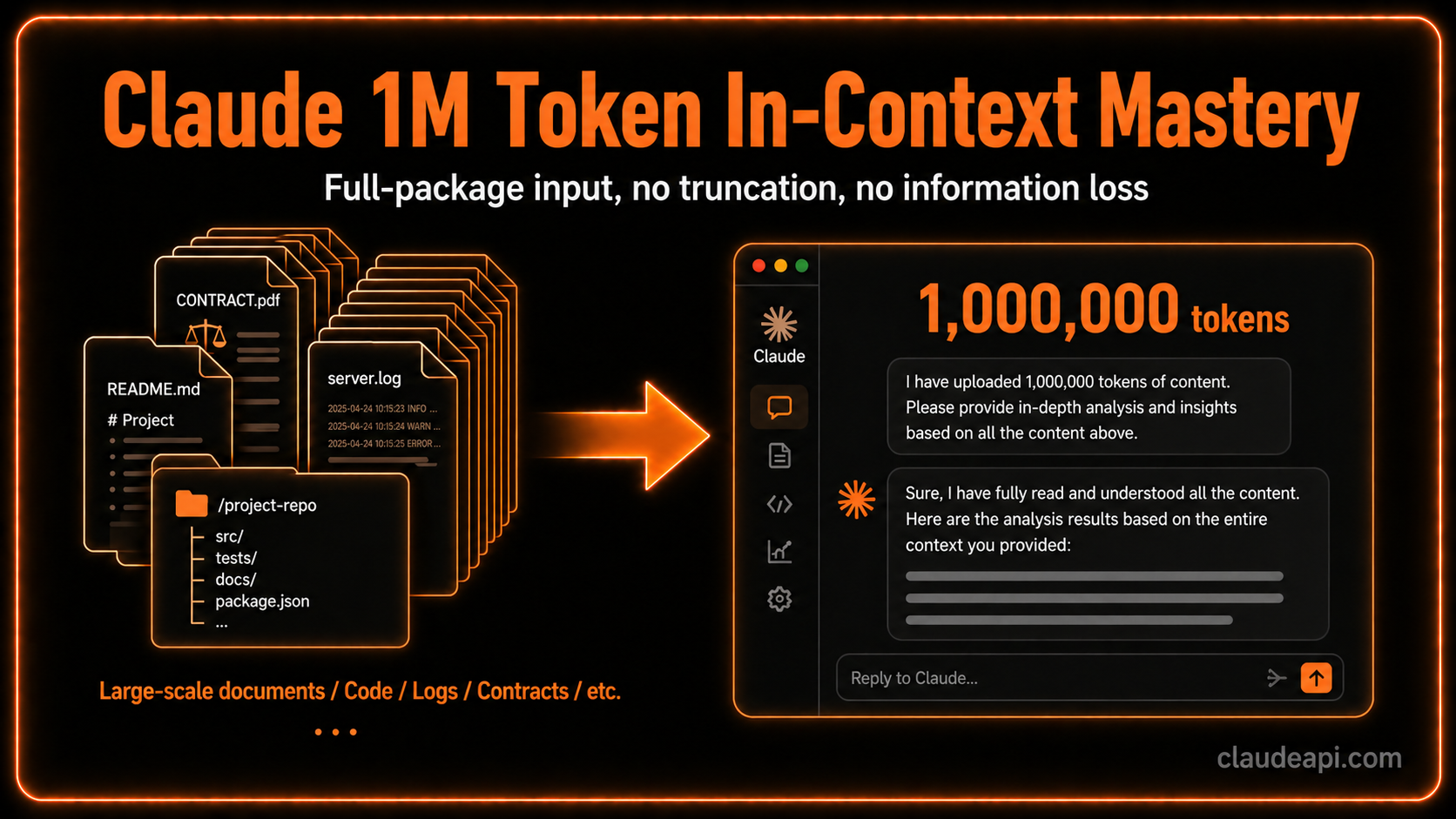

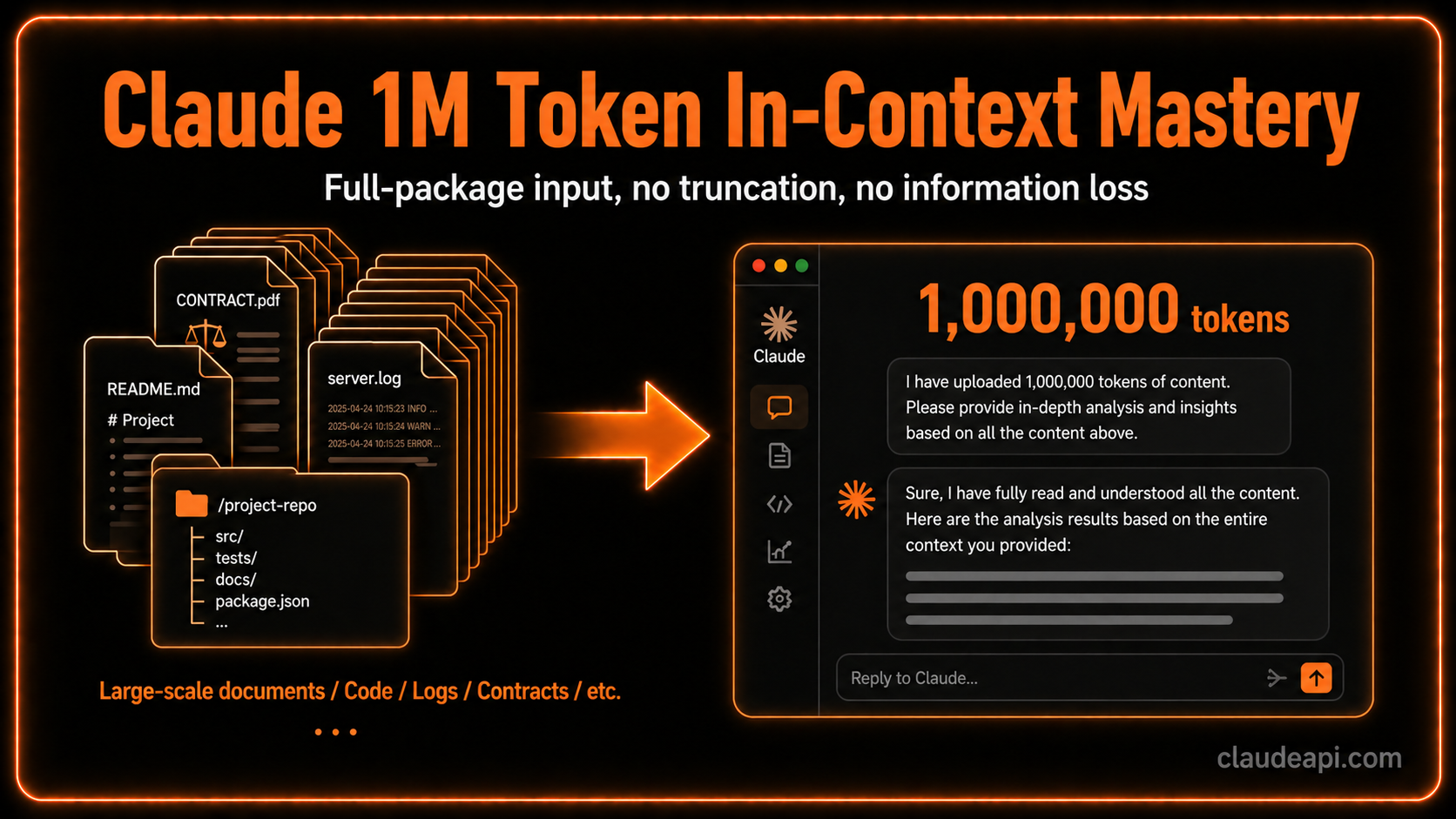

"Long context support is flawless — we've never had a single truncation issue, even with 200K token inputs. Batch processing is incredibly fast too. This has seriously accelerated our research pipeline. Reliable infrastructure for serious academic work."

"Your API has edge nodes across the globe — our users in Southeast Asia, the Middle East, and Europe all get snappy response times. Plus, they support multiple currencies and payment methods, which saved us the hassle of dealing with cross-border billing. Super convenient for distributed teams."

Read our latest articles

More articles

Claude Enters Legal AI: Anthropic’s MCP Push Is Rebuilding Professional Services Workflows

立即阅读

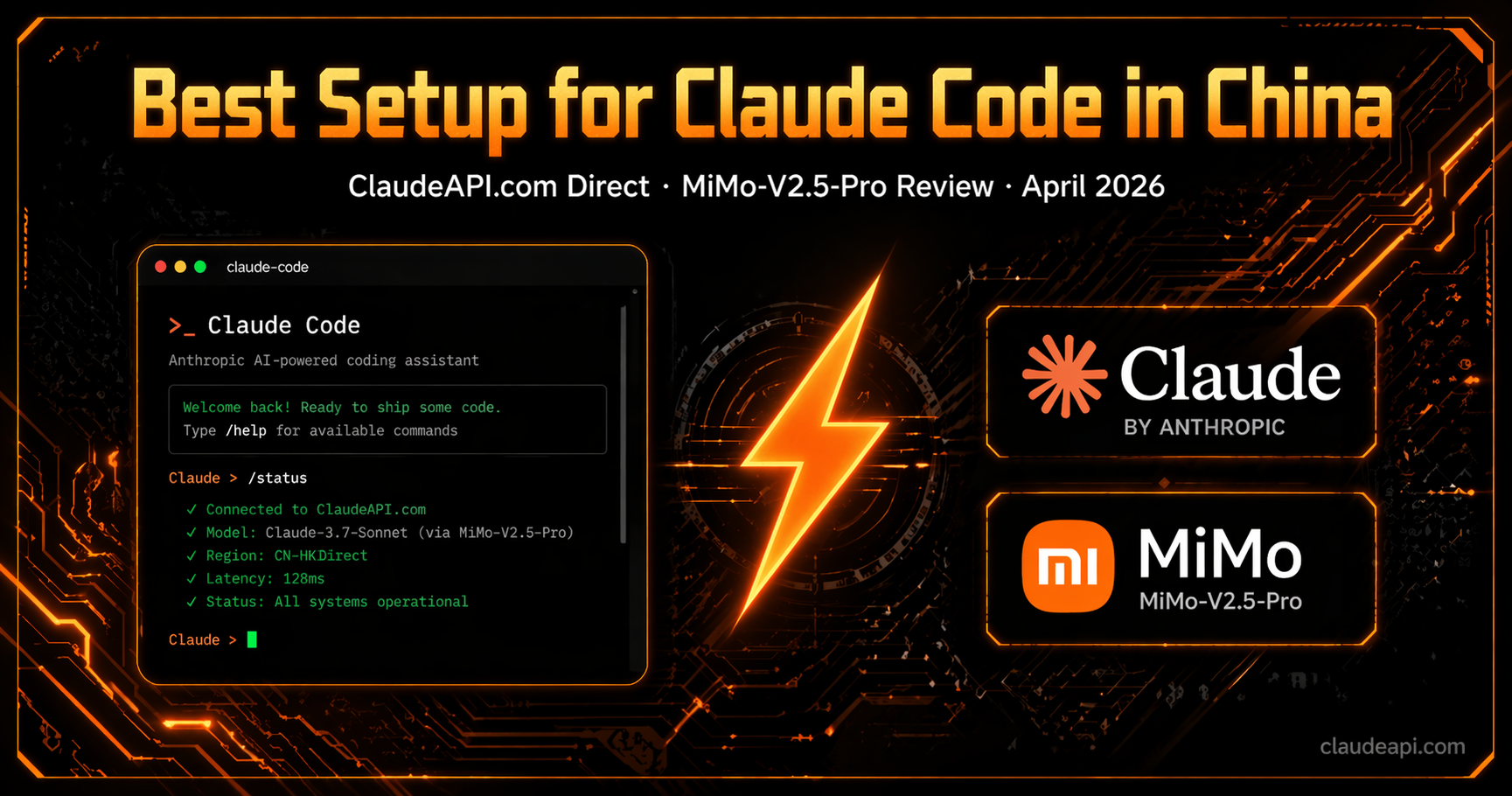

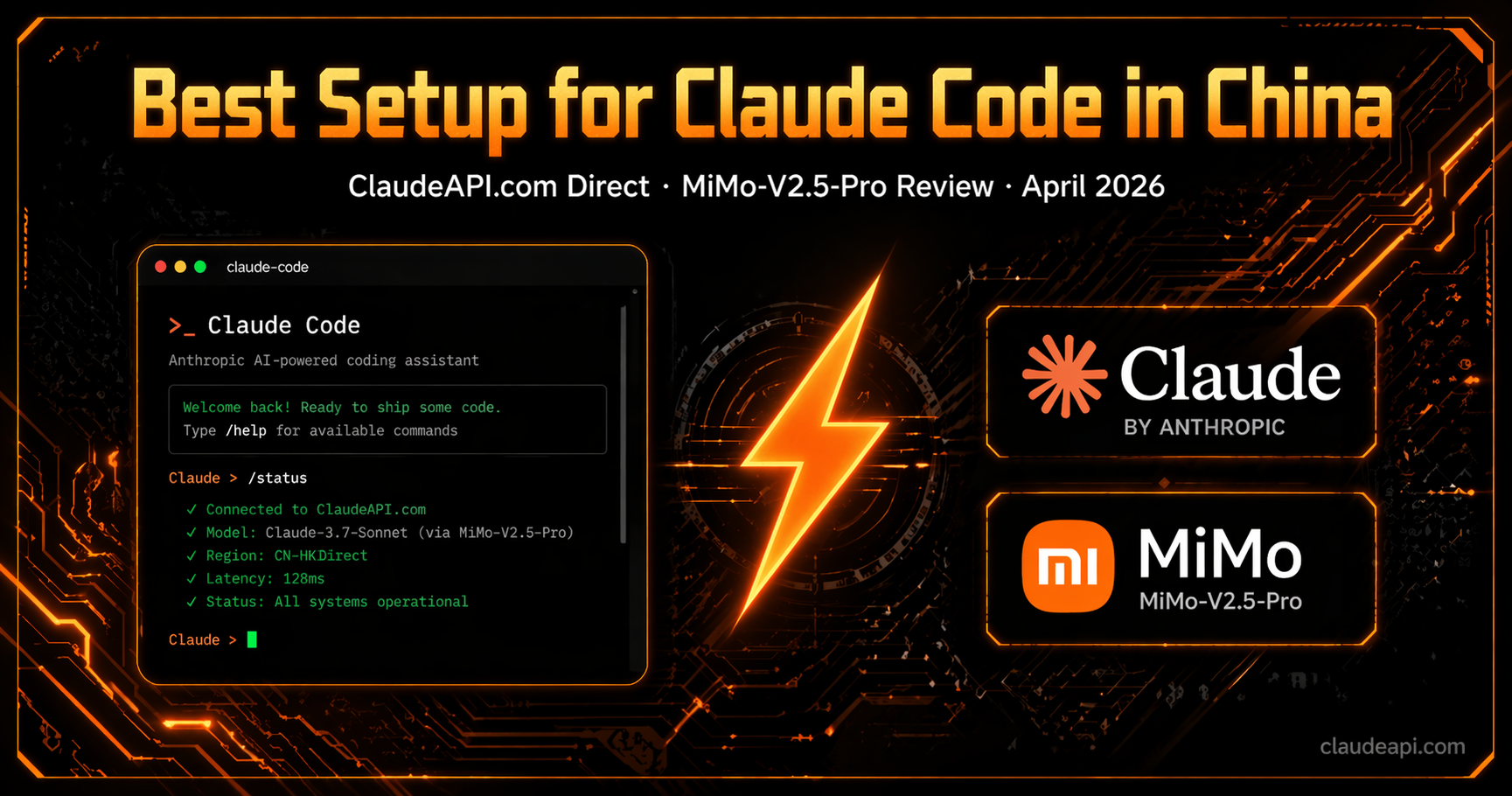

Use Claude Code with Claude API Key in 5 Minutes: A Beginner-Friendly CC Switch Setup Guide

立即阅读

Claude Code and Claude API Limits Just Got a Major Boost: What Anthropic’s SpaceX Compute Deal Means for Developers

立即阅读

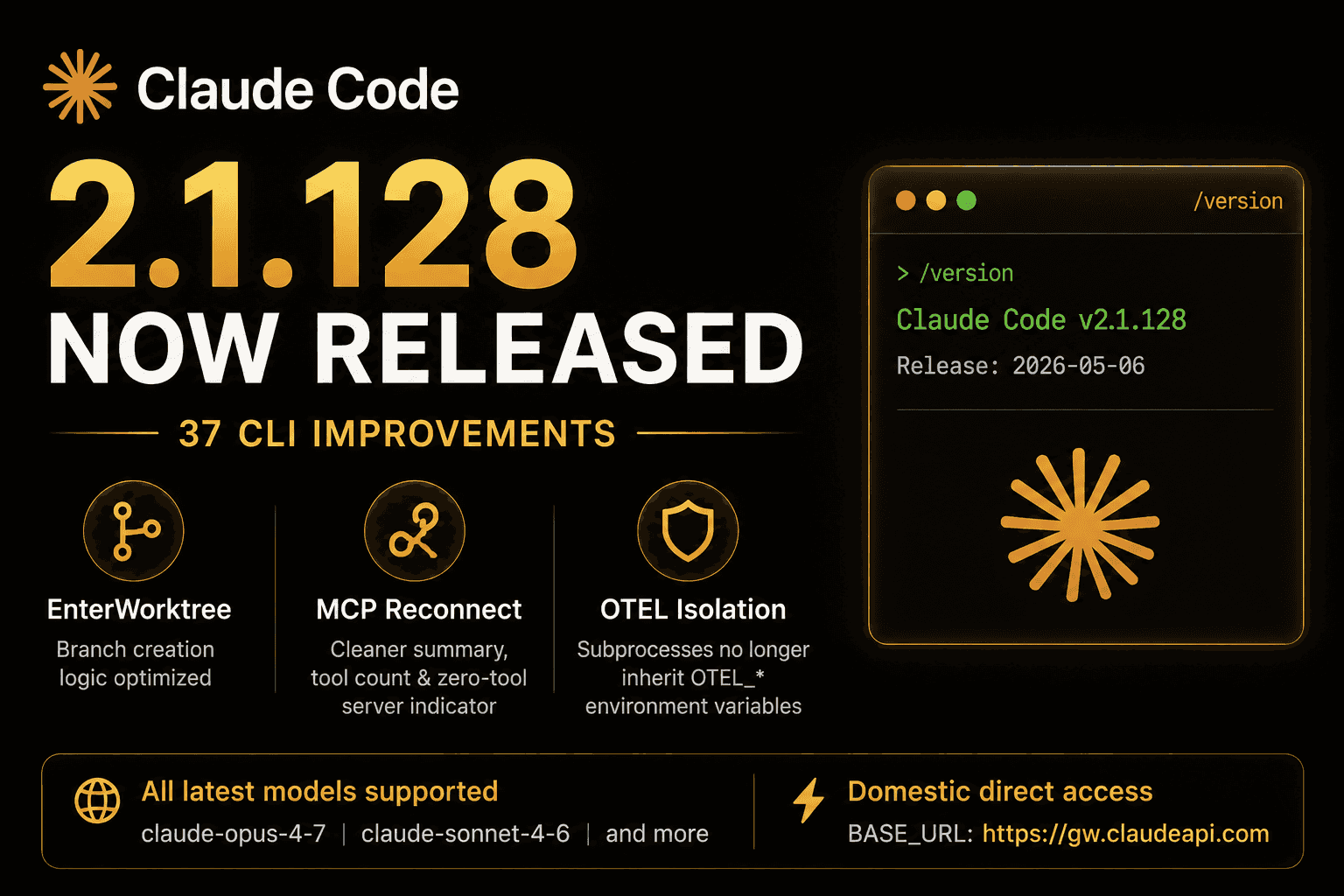

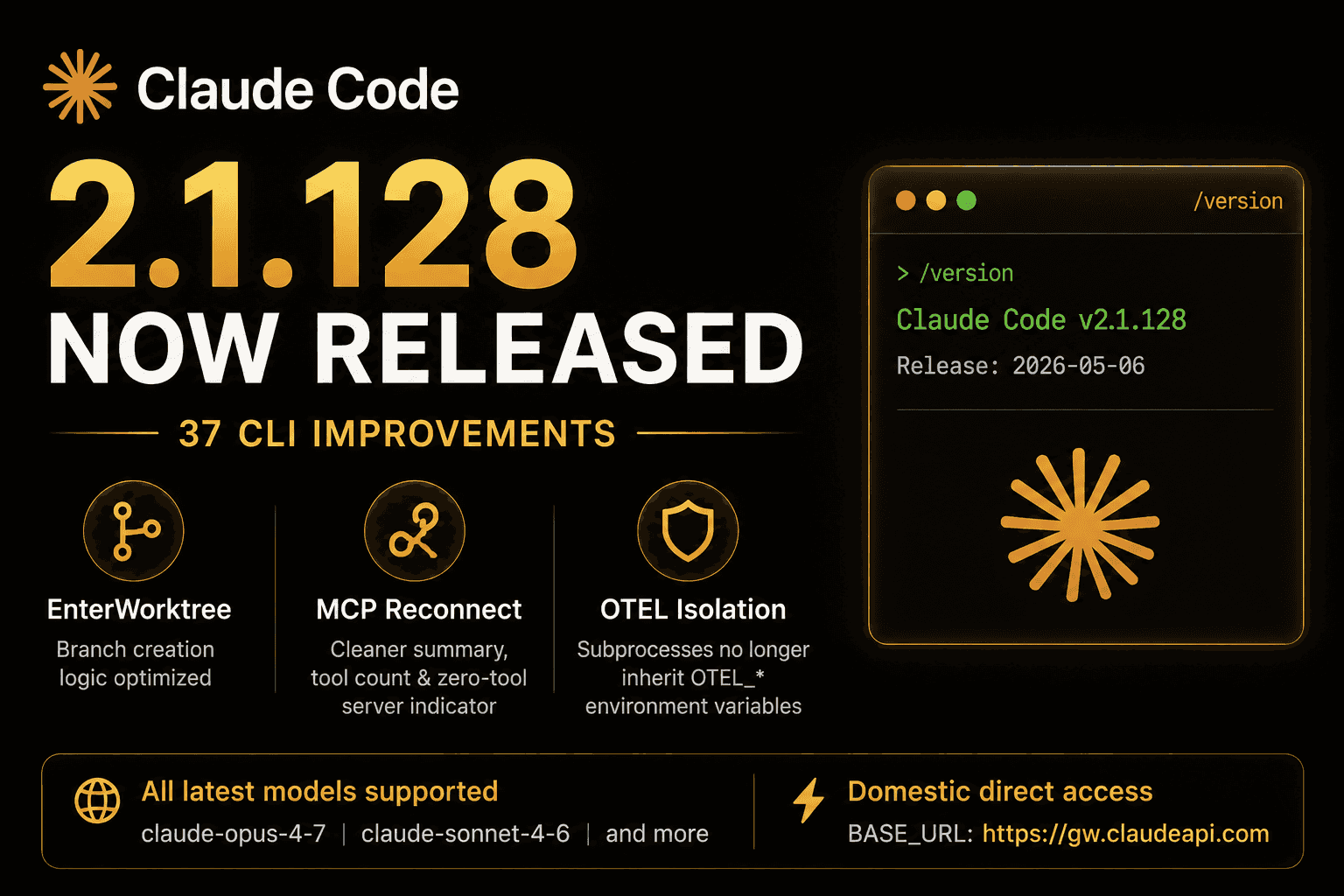

Claude Code 2.1.128 Is Here: 37 CLI Fixes, Better EnterWorktree Behavior, and Cleaner MCP Reconnects

立即阅读

How to Verify If Your Claude API Is Real: A Complete Guide to Model Fingerprint Detection

立即阅读

Google Just Bet $40 Billion on Anthropic: How Claude Code Sparked the Largest AI Funding Frenzy in History

立即阅读

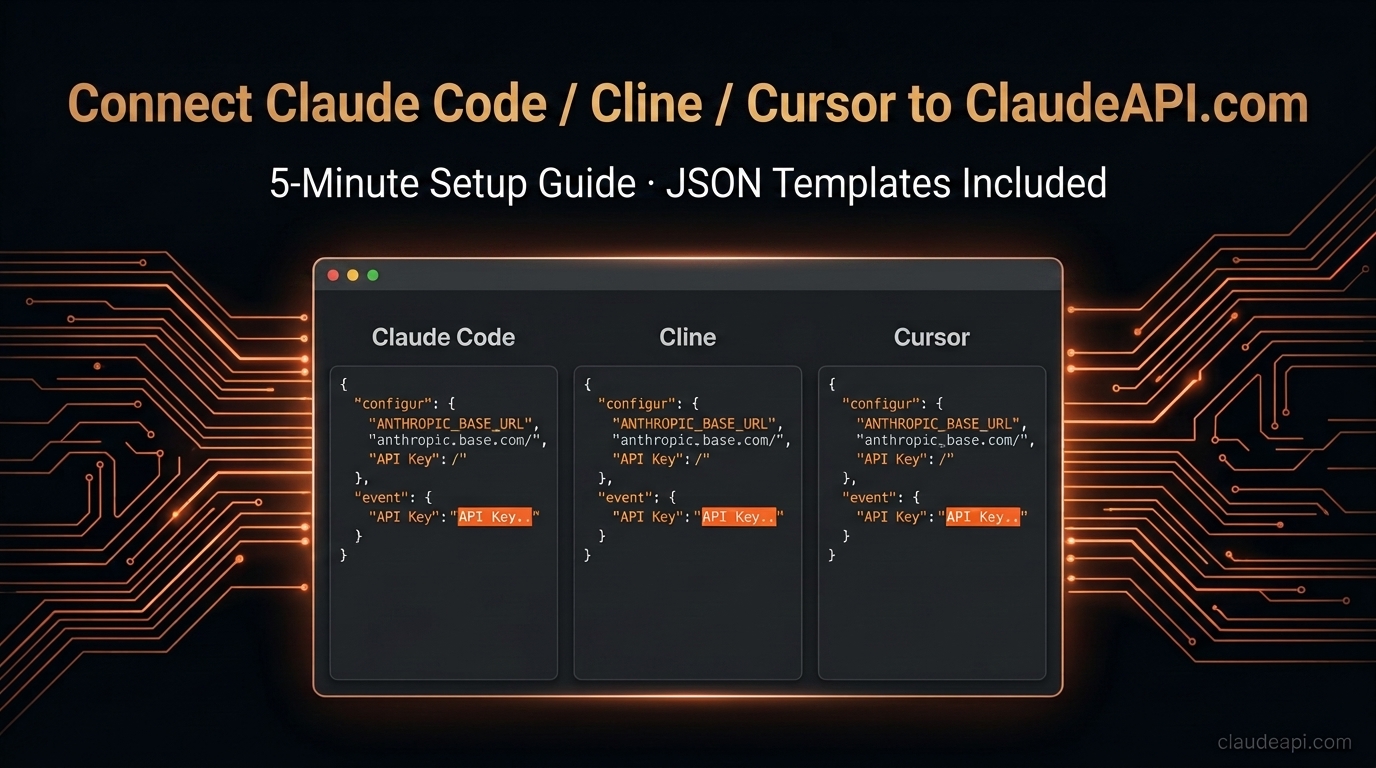

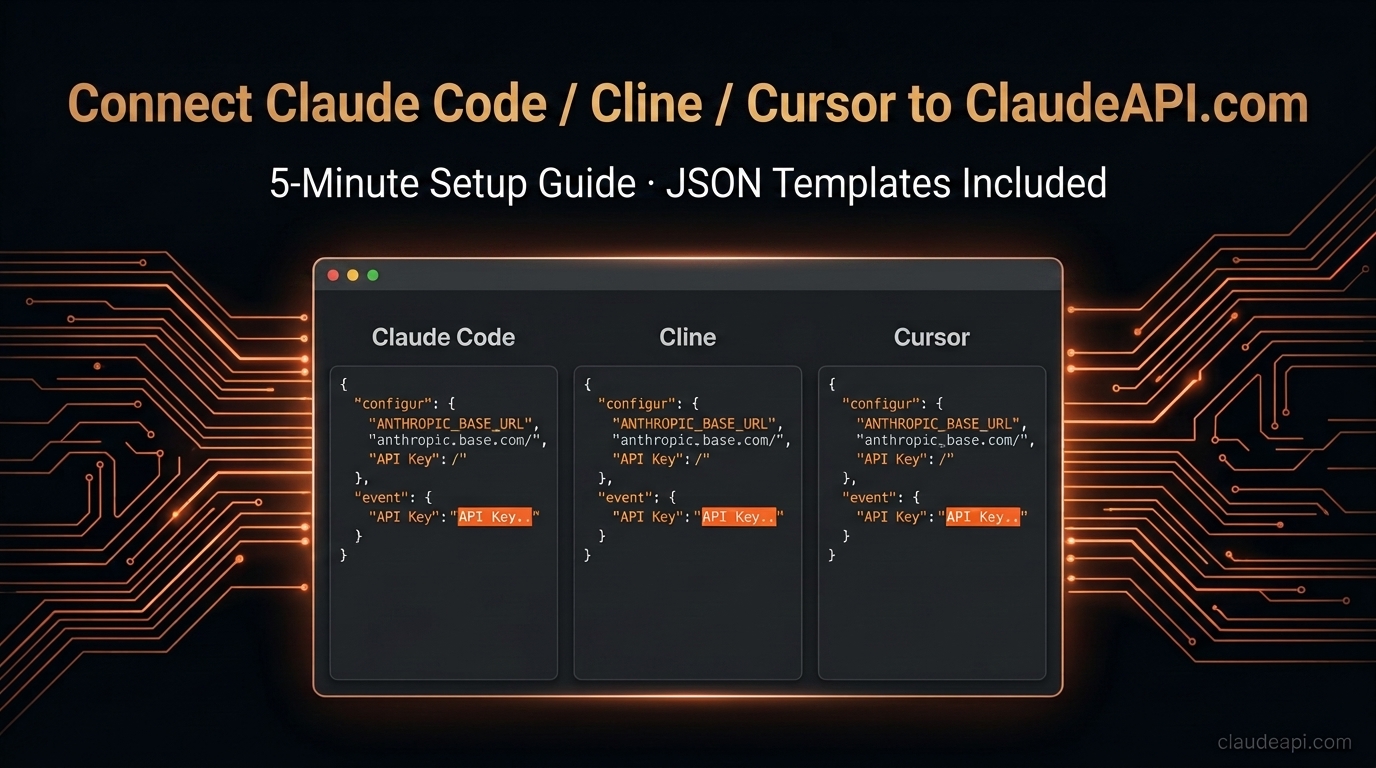

Connect Claude Code / Cline / Cursor to ClaudeAPI.com in (Copy-Paste JSON Templates Included)

立即阅读

Claude API Web Search Tool in Practice: Built-in Internet Search, No More Scraping (2026)

立即阅读

Claude Code's Thinking Depth Dropped 73% — Anthropic Admits It Quietly Dialed Down Default Reasoning, API Users Restored on April 7

立即阅读

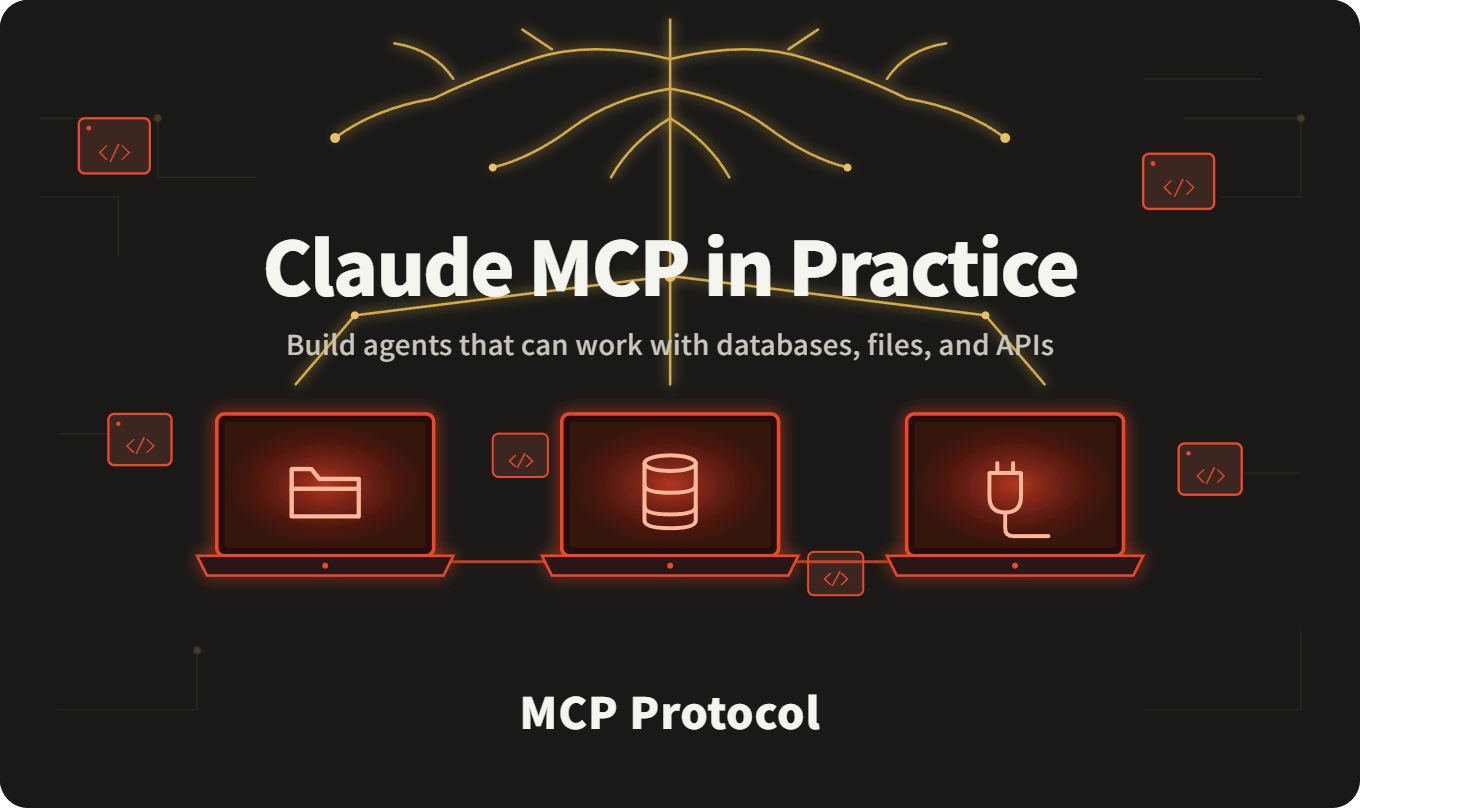

Claude MCP in Practice: Give Your AI Agent Real Control Over Databases, Files, and APIs (Full Code Included)

立即阅读

Why AI Compute Costs Keep Climbing — And What Anthropic's Latest Pricing Changes Mean for Developers

立即阅读

Claude API Pricing in 2026: Complete Cost Breakdown, Token Calculator & Money-Saving Tips

立即阅读

Claude API with Python: The Complete Beginner's Guide — From Setup to Streaming Output

立即阅读

Auto-Generate Weekly Status Reports with Claude API: Drop in Some Keywords, Get a Full Report in 10 Seconds (2026)

立即阅读

3 Steps to integrate Claude. No fluff.

From signup to your first API call in under 5 minutes

Add a Tech Advisor

Scan the QR code and add us on WeChat. Tell us your use case.

Get Your API Key

Your advisor sends your dedicated key within 10 minutes.

Start Calling

Replace base_url and access all models immediately.

Scan to add a tech advisor and get your API key in 5 minutes

FAQ

You might also want to know

We're an independent third-party service provider with access through two official channels: Anthropic's native API and AWS Bedrock. We're not an official Anthropic reseller, but 100% of requests are forwarded directly to official endpoints.

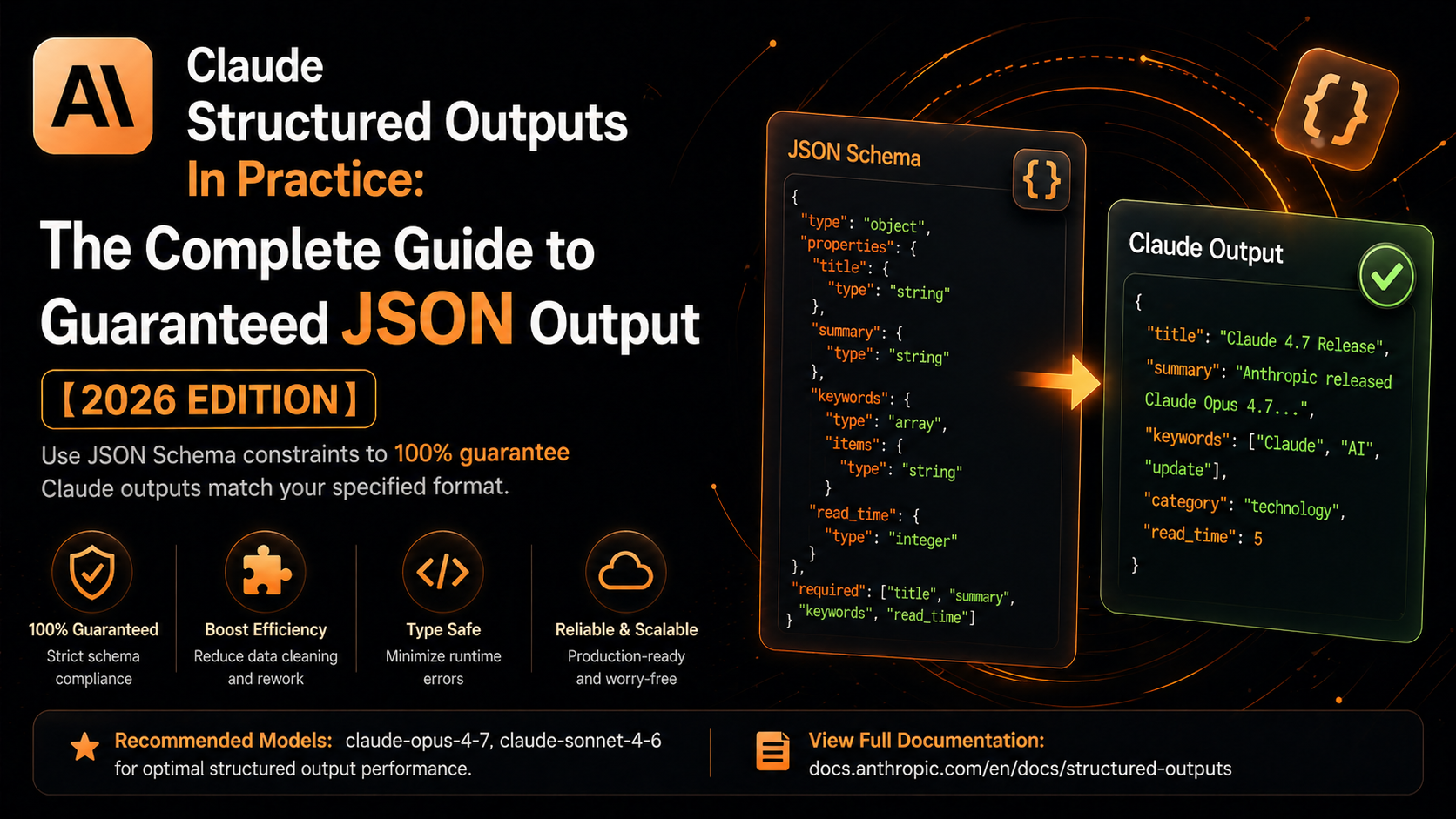

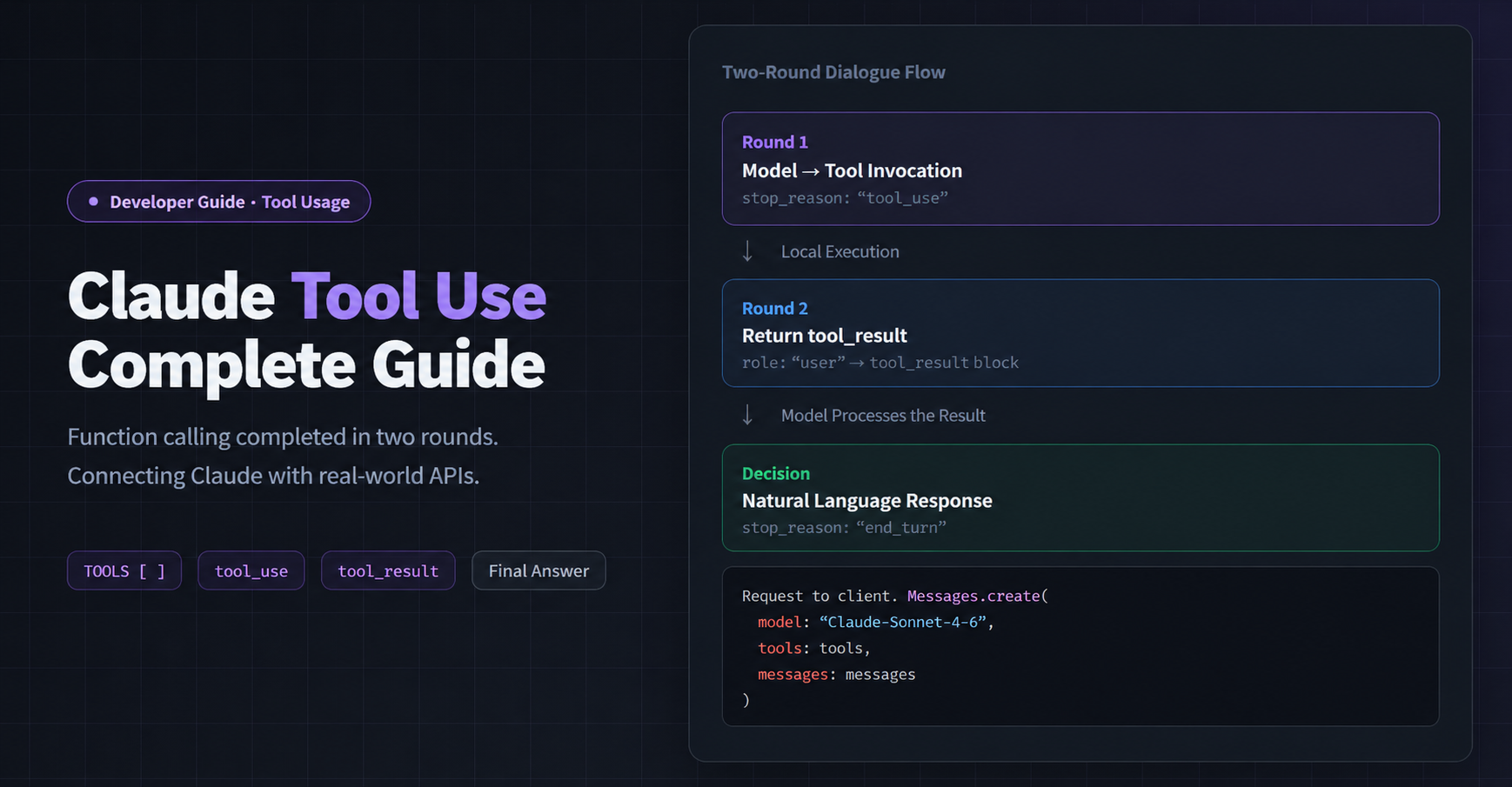

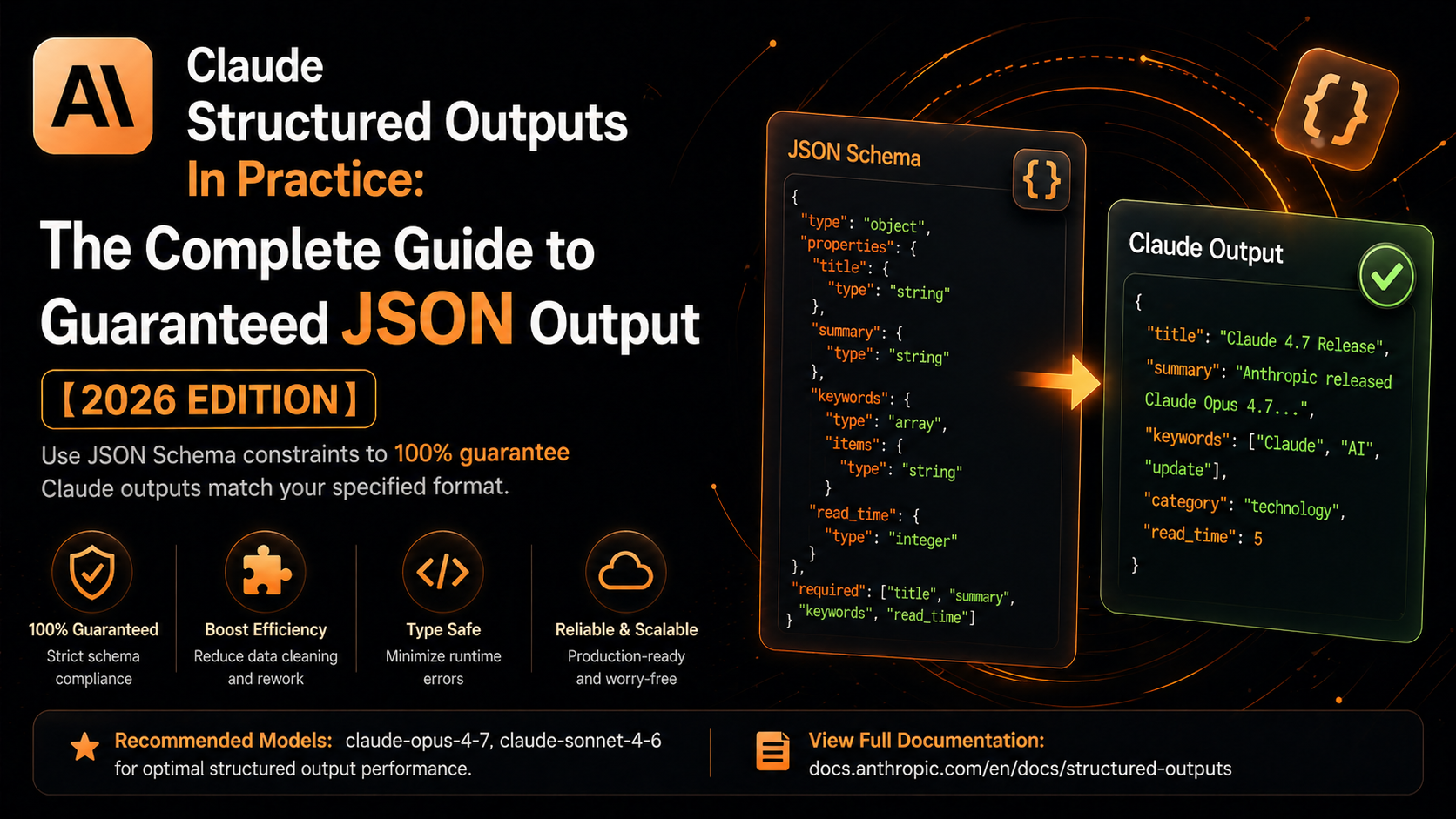

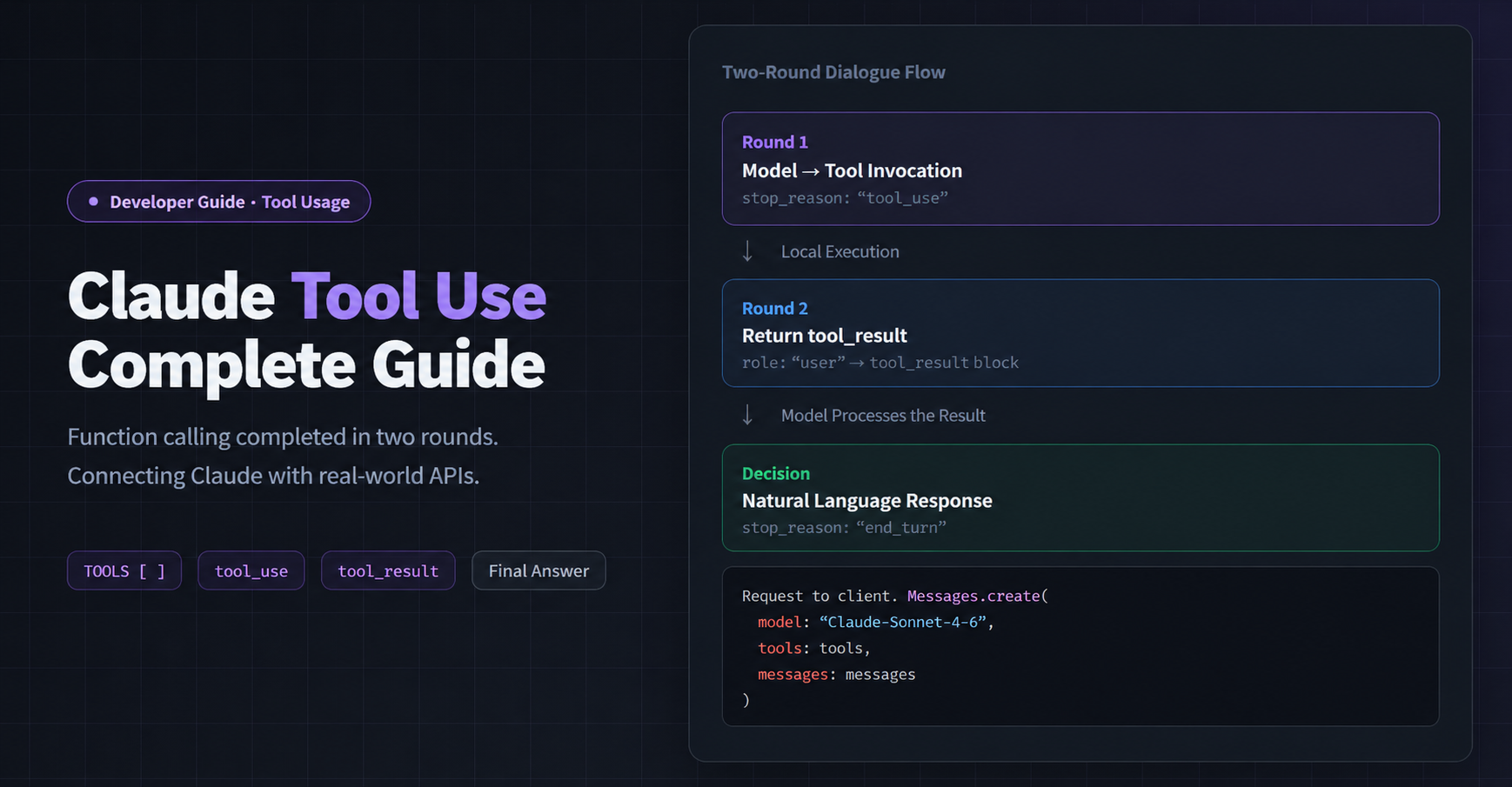

Neither. We use only official API keys and Bedrock's official integration, preserving the full native capabilities of Opus / Sonnet / Haiku — including 1M context and Tool Use. Feel free to benchmark against the official API.

No. All requests are encrypted over HTTPS. We don't persist conversation content on our servers — only token counts and status codes are recorded for billing. Enterprise customers can sign a DPA.

Yes. Both personal and corporate billing names are accepted. We issue standard electronic invoices and VAT invoices — request one from the dashboard in a single click. Enterprise customers can also pay by bank transfer with a formal contract.

Register via the top-right button → create a Key in the dashboard → copy the base_url. You can be up and running in 5 minutes. Everything is self-service; for enterprise contracts or large volumes, contact our sales team.

We're a formally registered technology company with a track record of stable operation. All user funds are held through third-party payment platforms. Our operations are compliant and transparent, and we're committed to serving developers long-term.

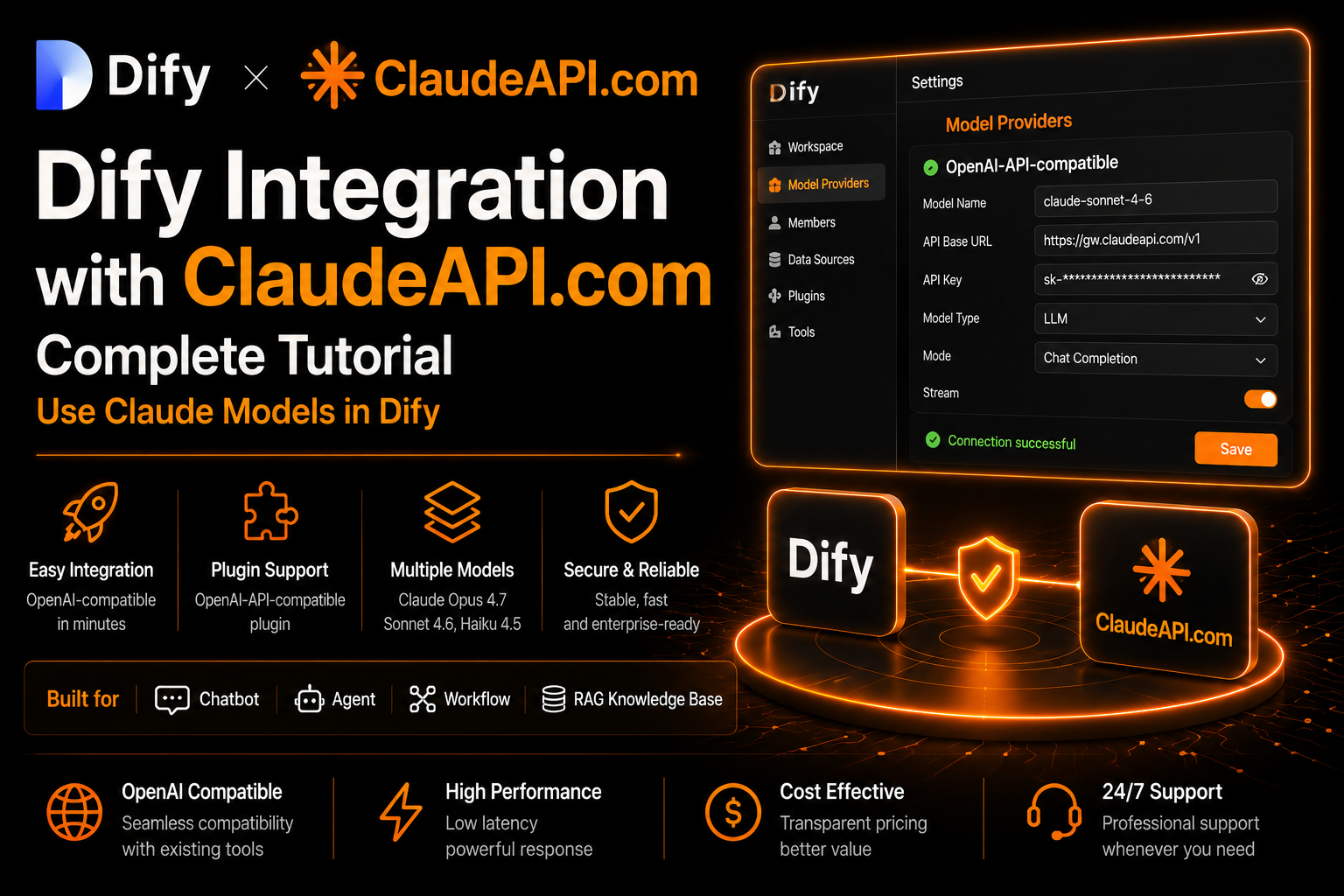

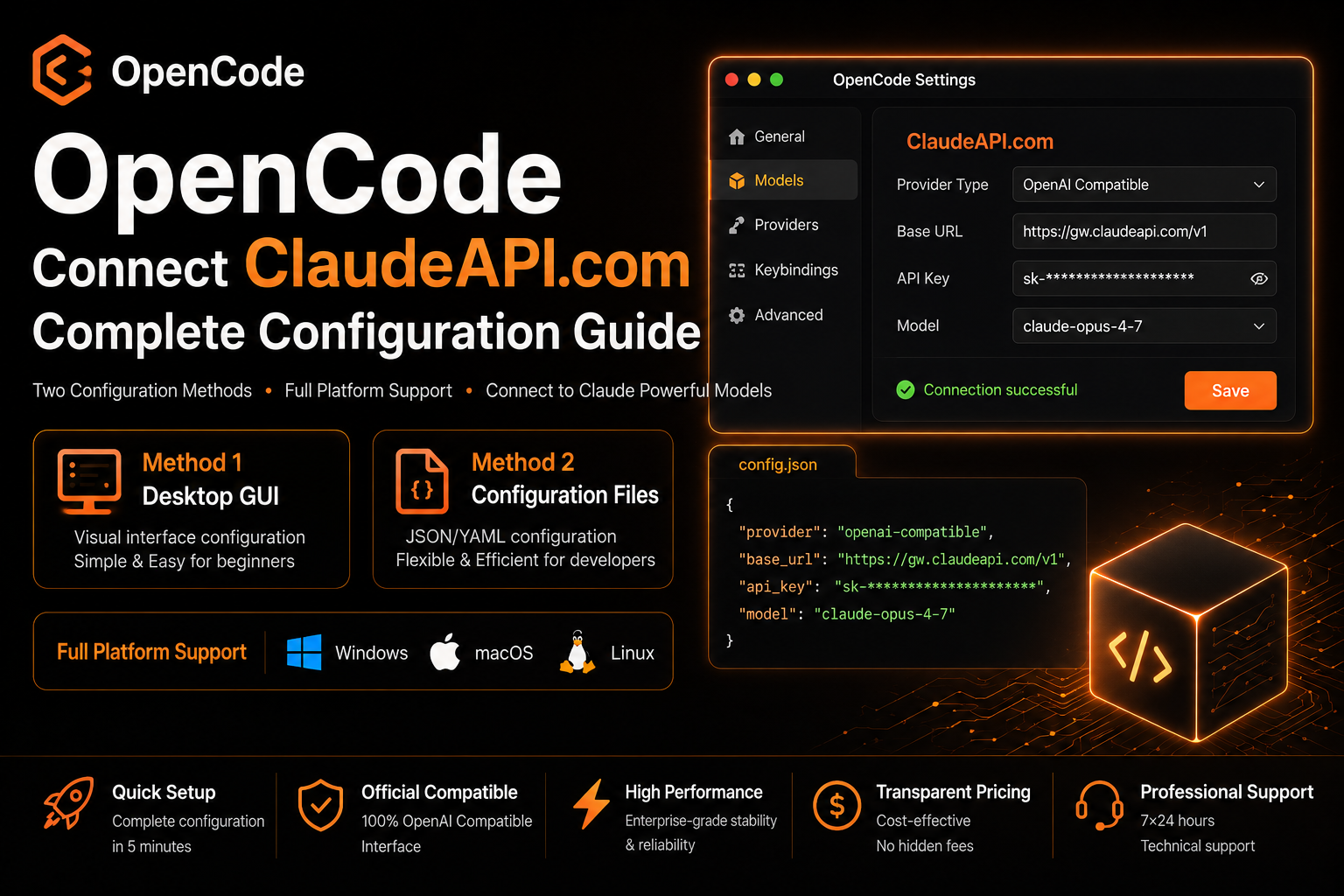

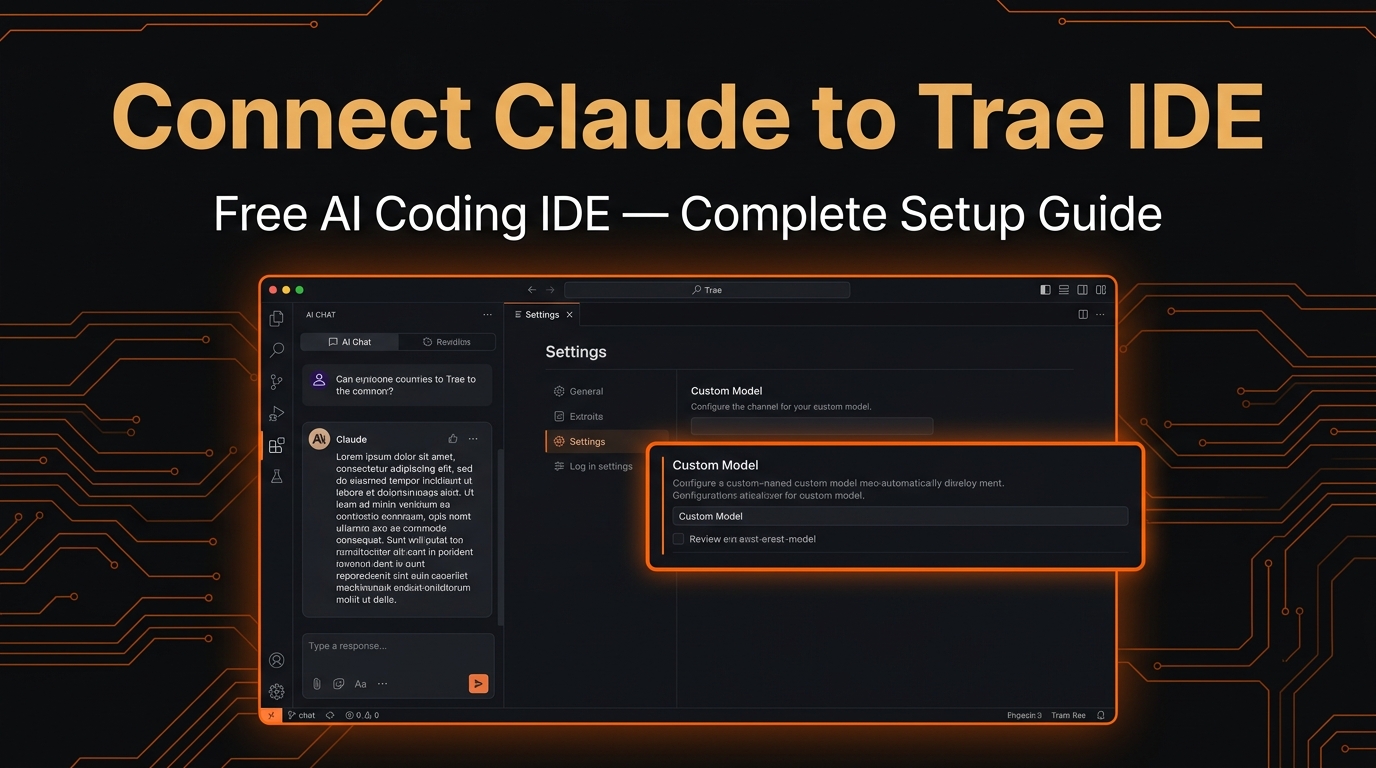

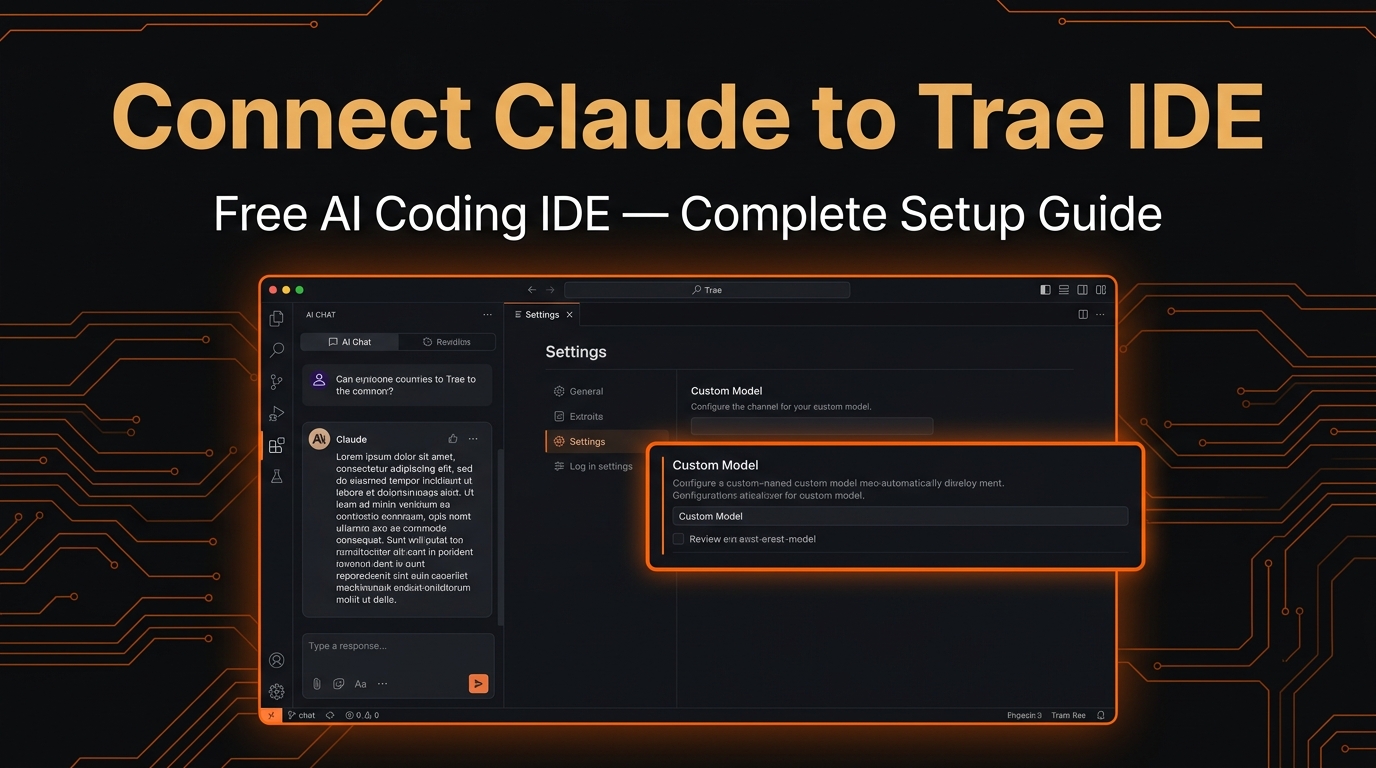

We support the full Claude Opus / Sonnet / Haiku lineup and are compatible with Claude Code, Cursor, Cline, Continue, and other mainstream Agent tools.

Your Claude API — set up in the time it takes to finish a coffee.

Add our tech advisor on WhatsApp and get your dedicated API key instantly.