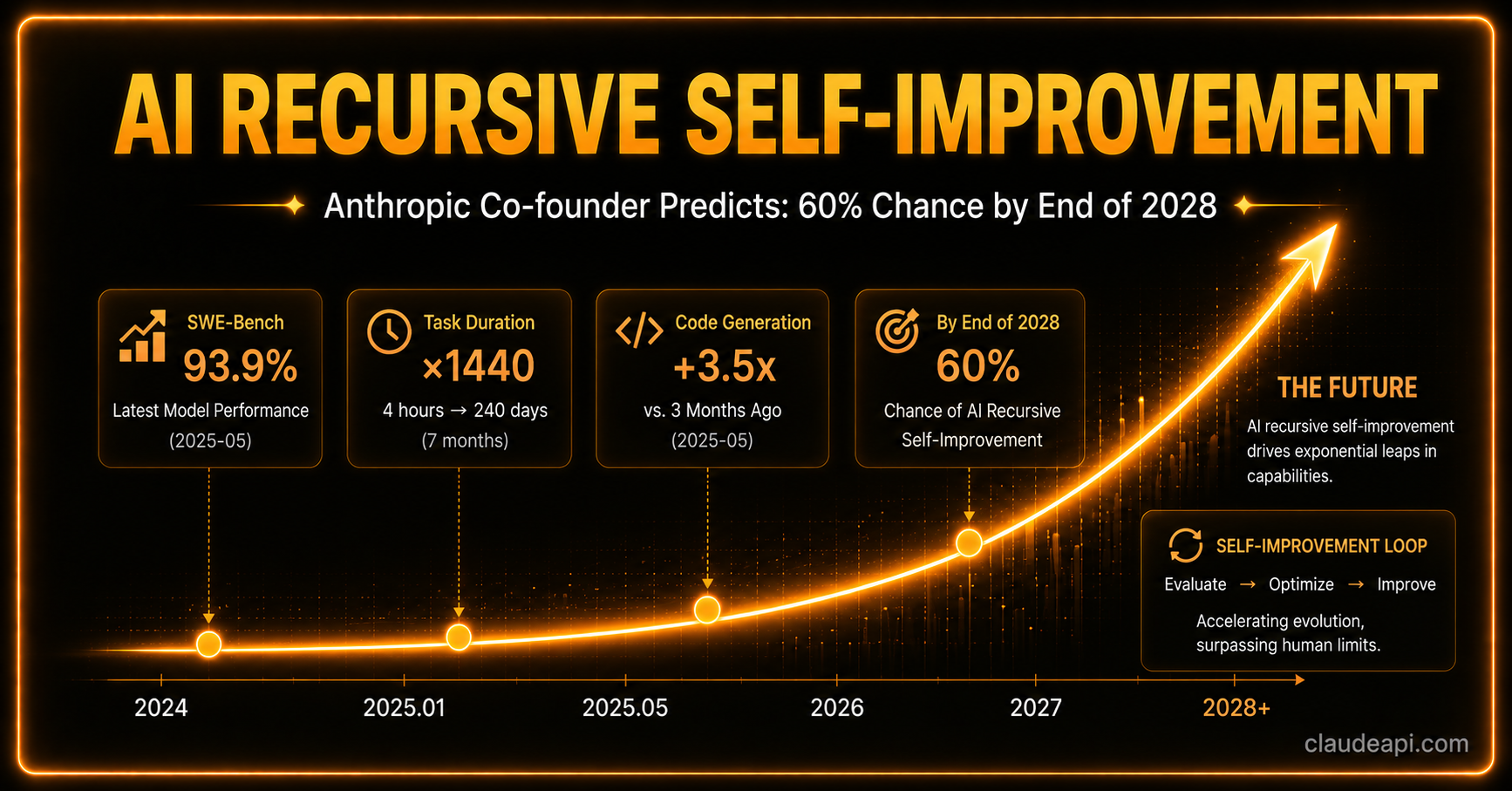

AI Recursive Self-Improvement: Anthropic Co-Founder Predicts 60% Chance by 2028

What would it feel like if AI could autonomously build and improve even more powerful AI — without human engineers in the loop?

Anthropic co-founder Jack Clark has shared his assessment: there’s a 60% probability this happens by the end of 2028.

This isn’t a sci-fi plot device. It’s a conclusion he derived step by step from hundreds of published papers and capability benchmark data.

What Is Recursive Self-Improvement (RSI)?

The core idea behind Recursive Self-Improvement (RSI) is an AI system that can autonomously design, train, and improve the next generation of AI systems, forming a self-iterating loop that requires no human intervention.

Clark likens it to crossing the Rubicon — once you’re across, you’ve entered a future that’s nearly impossible to predict with existing frameworks.

The concept sounds abstract, but he grounds it in concrete data.

The Data Speaks: AI Capabilities Are Accelerating

Task Duration: 1,440x Increase in Four Years

METR’s research tracked the duration of tasks AI can complete independently (at a 50% success rate):

- 2022: ~30 seconds

- 2026: ~12 hours

- Projected by end of 2026: potentially exceeding 100 hours

In four years, that number jumped from 30 seconds to 12 hours — a 1,440x increase. This means the complexity of tasks AI can handle autonomously is expanding at a staggering pace.
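To make the arithmetic explicit, here is a small sketch that reproduces the 1,440x figure and derives the doubling time it implies. It assumes the two data points are exactly four years apart and that growth between them was smoothly exponential; neither assumption comes from METR, so treat the doubling time as a rough illustration.

```python
import math

# Figures quoted above (tasks completed independently at a 50% success rate).
start_seconds = 30            # ~30 seconds in 2022
end_seconds = 12 * 60 * 60    # ~12 hours in 2026
elapsed_months = 4 * 12       # assumption: exactly four years between the two points

fold_increase = end_seconds / start_seconds

# Assumption: smooth exponential growth between the two data points.
doublings = math.log2(fold_increase)
doubling_time_months = elapsed_months / doublings

print(f"fold increase: {fold_increase:,.0f}x")
print(f"doublings: {doublings:.1f}")
print(f"implied doubling time: {doubling_time_months:.1f} months")
```

Under those assumptions, the task horizon doubled a little more than ten times, or roughly once every four and a half months.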

SWE-Bench: From 2% to 93.9%

SWE-Bench tests AI’s ability to solve real GitHub issues — one of the benchmarks closest to actual production software engineering:

| Date | Claude model score on SWE-Bench |
|---|---|
| Late 2023 | 2% |
| 2026 | 93.9% |

In under three years, this benchmark has been nearly solved.

CORE-Bench: From 21.5% to 95.5% in 15 Months

CORE-Bench measures AI’s ability to reproduce experimental results from research papers — one of the most time-consuming steps in the scientific workflow:

- 2024: top accuracy 21.5%

- Mid-2025: top accuracy 95.5%

In 15 months, top accuracy went from roughly one in five to near-perfect. The benchmark has been officially declared “solved.”

MLE-Bench: AI Competing on Kaggle

MLE-Bench has AI participate in real machine learning competitions (Kaggle), evaluating its practical performance on model optimization tasks:

- October 2024: top score 16.9%

- February 2026: top score 64.4%
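For a side-by-side view, the sketch below computes the percentage-point gain on each of the three benchmarks quoted so far, plus a crude average gain per month where the time span is stated. The month counts are read off the dates above (15 months for CORE-Bench as quoted, 16 months for October 2024 to February 2026); the SWE-Bench span is left out because only "late 2023" and "2026" are given. The benchmarks are scored differently, so the rates are not directly comparable and a linear average hides how uneven progress may have been.

```python
# (benchmark, start score %, end score %, months elapsed or None if not stated)
benchmarks = [
    ("SWE-Bench", 2.0, 93.9, None),   # "late 2023" to "2026": exact span not stated
    ("CORE-Bench", 21.5, 95.5, 15),   # 15 months, as quoted
    ("MLE-Bench", 16.9, 64.4, 16),    # October 2024 to February 2026
]


def summarize(rows):
    """Return (name, point gain, average points per month or None) for each row."""
    summary = []
    for name, start, end, months in rows:
        gain = round(end - start, 1)
        per_month = round(gain / months, 1) if months else None
        summary.append((name, gain, per_month))
    return summary


for name, gain, per_month in summarize(benchmarks):
    rate = f"{per_month} points/month" if per_month is not None else "rate not computed"
    print(f"{name}: +{gain} points, {rate}")
```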

AI Optimizing Training Code: 52x Speedup

In Anthropic’s internal tests, the speedup AI achieved when optimizing training code for small language models rose from 2.9x to 52x over baseline in less than a year.

This means AI can already effectively perform engineering optimization on “AI training itself.”

The Core Logic: 99% of the Engineering Is About to Be Automated

Clark borrows Edison’s famous quote to divide AI research into two parts:

- 1% inspiration: Truly groundbreaking ideas, like the invention of the Transformer architecture

- 99% perspiration: Data cleaning, running experiments, hyperparameter tuning, reproducing papers…

His assessment: AI is rapidly taking over that 99% of engineering work.

Several concrete signs support this:

- AI acting as project manager: Existing systems can already orchestrate multiple AI sub-tasks like a PM — assigning work and aggregating results.

- PostTrainBench performance: On tasks involving fine-tuning open-source models to boost performance, AI can already achieve roughly half the effectiveness of human researchers.

- Anthropic’s internal proof of concept: In experiments on “automated alignment research,” AI-proposed approaches actually exceeded the human researcher baseline.
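The "project manager" pattern in the first sign above (assign work, then aggregate results) can be sketched in a few lines. This is a minimal illustration, not a description of any system Clark refers to: `SubTask`, `run_worker`, and `orchestrate` are hypothetical names, and `run_worker` is a stub standing in for a real model or agent call.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass
class SubTask:
    name: str
    payload: str


def run_worker(task: SubTask) -> str:
    # Hypothetical stand-in for a call to a model or agent.
    return f"{task.name}: processed {len(task.payload)} chars"


def orchestrate(tasks: list[SubTask], max_workers: int = 4) -> str:
    """Assign each sub-task to a worker, then aggregate results in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Executor.map yields results in the same order as the inputs.
        results = list(pool.map(run_worker, tasks))
    return "\n".join(results)


if __name__ == "__main__":
    plan = [
        SubTask("clean-data", "raw rows"),
        SubTask("run-experiment", "config A"),
    ]
    print(orchestrate(plan))
```

A thread pool fits here because real worker calls would mostly wait on network responses; a production orchestrator would also need retries, timeouts, and some check on each worker's output before aggregating.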

Once AI also achieves breakthroughs in that 1% of “inspiration,” the complete “research → improvement → stronger AI” loop will be in place.

Why 60%, and Not Higher?

Clark’s prediction is not unconditionally optimistic. He breaks the probability into two stages:

- End of 2027: 30% probability

- End of 2028: 60% probability

He acknowledges that AI still has a systematic gap in breakthrough research requiring “creative intuition” — the kind of ability to propose genuinely new paradigms that current models don’t yet possess.

The 30% for 2027 reflects a scenario where “engineering automation is largely complete but the creativity gap remains open.” The 60% for 2028 is based on his judgment that there’s a considerable probability this capability gap gets closed before then.
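It helps to spell out what the two figures imply together. Assuming both are cumulative probabilities for the same event (RSI by the given date), which is how they read here, the sketch below derives the probability assigned to 2028 specifically and the chance of RSI during 2028 given that it has not happened by the end of 2027. The variable names are illustrative.

```python
# Cumulative probabilities quoted above.
p_by_2027 = 0.30
p_by_2028 = 0.60

# Probability that RSI arrives during 2028 specifically.
p_during_2028 = p_by_2028 - p_by_2027

# Probability of RSI during 2028, given it has not happened by the end of 2027.
p_2028_given_not_2027 = p_during_2028 / (1 - p_by_2027)

# Probability that RSI has still not happened by the end of 2028.
p_not_by_2028 = 1 - p_by_2028

print(f"during 2028: {p_during_2028:.0%}")
print(f"during 2028, given not by end of 2027: {p_2028_given_not_2027:.0%}")
print(f"not by end of 2028: {p_not_by_2028:.0%}")
```

Read this way, the forecast puts as much probability on 2028 alone as on everything up to the end of 2027, and still leaves a 40% chance that RSI has not arrived by the end of 2028.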

The Skeptics Deserve a Fair Hearing

To be fair, Clark’s article also outlines several counterarguments:

Diminishing marginal returns: AI self-improvement may not yield exponential growth — it could hit diminishing returns, making breakthroughs increasingly difficult in certain dimensions.

Definitional ambiguity: There is no authoritative, unified definition of “recursive self-improvement” in the field. Different people may have very different standards for what “achieving RSI” actually means.

Capability gaps: As Clark himself admits, current AI still has clear shortcomings in pioneering research.

These objections aren’t dismissals — they’re reminders that predicting inflection points like this comes with inherent, enormous uncertainty.

The More Urgent Problem: The Governance Window Is Closing

The technical debate can continue, but what concerns Clark more is something else entirely: we’re running out of time.

He warns:

- If RSI occurs, current AI alignment techniques will degrade sharply after multiple generations of iteration

- Society, the research community, and policymakers are far from adequately prepared

- OpenAI, Anthropic, and new companies focused on this direction (such as Recursive Superintelligence) are pushing forward at full speed

The entire industry has its foot on the gas, and the brakes haven’t been built yet.

What Does This Mean for Developers?

If even half of Clark’s assessment is correct, the AI capability leap over the next few years will be the fastest we’ve ever seen.

For developers currently building products with the Claude API, this means:

- The AI workflows you build today may be automatically optimized by AI tomorrow — embedding automation capabilities into your product architecture is a direction worth serious consideration.

- Model capability boundaries are shifting rapidly — regularly reassessing your task allocation (what goes to AI, what stays with humans) is an essential habit.

- Complex multi-agent collaboration is becoming viable — orchestrating AI to complete end-to-end research or engineering tasks is no longer just a lab concept.
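One way to make the "regularly reassess your task allocation" habit concrete is to keep a small fixed set of test cases per task and rerun it whenever a new model ships. The sketch below is one possible shape for that check, under stated assumptions: `pass_rate`, `allocate`, the 0.9 threshold, and `stub_model` are all hypothetical, and `stub_model` stands in for a real model call.

```python
from typing import Callable

# A case pairs a prompt with a checker that returns True on an acceptable answer.
Case = tuple[str, Callable[[str], bool]]


def pass_rate(model: Callable[[str], str], cases: list[Case]) -> float:
    """Fraction of cases whose model output passes its checker."""
    if not cases:
        raise ValueError("need at least one case")
    passed = sum(1 for prompt, check in cases if check(model(prompt)))
    return passed / len(cases)


def allocate(rate: float, threshold: float = 0.9) -> str:
    """Route the task to AI only when the pass rate clears the threshold."""
    return "ai" if rate >= threshold else "human-review"


def stub_model(prompt: str) -> str:
    # Placeholder for a real model call; it simply upper-cases the prompt.
    return prompt.upper()


cases: list[Case] = [
    ("ok", lambda out: out == "OK"),
    ("hi", lambda out: out == "HI"),
    ("no", lambda out: out == "yes"),  # deliberately failing case
]

rate = pass_rate(stub_model, cases)
print(f"pass rate: {rate:.2f} -> {allocate(rate)}")
```

Rerunning the same cases against each new model turns "what goes to AI, what stays with humans" into a measured decision; the threshold is a product judgment and would differ by task.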

Takeaway

Jack Clark’s prediction is neither doomsday prophecy nor blind optimism — it’s a data-driven technical assessment with clearly stated uncertainty ranges.

From SWE-Bench’s 2% to 93.9%, from 30-second tasks to 12-hour tasks, from manually running experiments to AI automatically optimizing training code… these numbers aren’t predicting the future. They’re describing what has already happened.

2028 is two and a half years away. Whether or not RSI arrives on schedule, the wave of AI R&D automation is already underway.

If you’re evaluating how to integrate the Claude API into your product or workflow, ClaudeAPI.com offers a fully compatible API access service supporting the latest models including claude-opus-4-7 and claude-sonnet-4-6. Direct access from anywhere, no extra configuration needed.