How to Verify If Your Claude API Is Real: A Complete Guide to Model Fingerprint Detection

When using a third-party Claude proxy service, one unavoidable question arises: Is the underlying model actually Anthropic’s Claude?

Bait-and-switch does happen in this market: some services claim to offer Claude but actually route requests to other models. For developers running production workloads, this is more than a matter of trust, because it directly affects output quality and business reliability.

This guide covers 5 model fingerprint detection methods that require no external tools. You can verify authenticity simply by analyzing API behavioral characteristics.

Why You Need to Verify Model Authenticity

Model authenticity verification is especially critical in the following scenarios:

- Using a third-party API proxy or relay service

- Integrating with multi-layered AI application platforms

- Your business relies on specific model capabilities (e.g., Constitutional AI, Extended Thinking)

- You notice model behavior that clearly deviates from official descriptions

Method 1: Identity Claim Consistency

The most basic check: directly ask the model about its identity and observe the consistency of its responses.

Test Prompts:

1. Who are you?

2. Which company developed you?

3. What is your model name and version?

4. What is your knowledge cutoff date?

Expected Results:

- Consistently identifies itself as Claude, developed by Anthropic

- Knowledge cutoff date matches the official specification (August 2025 for claude-opus-4-7)

- Responses remain stable across multiple queries with no contradictions

Red Flags:

- Claims to be multiple different products simultaneously (e.g., “I am AWS Kiro, based on Claude”)

- Evades identity questions or gives vague answers

- Different phrasings yield different conclusions
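The identity check above can be scripted as a rough heuristic. The sketch below is only an illustration: `FOREIGN_NAMES`, `check_identity`, and `collect_replies` are hypothetical names, the name list is an assumption you should extend, and `ask` is any function you supply that sends one prompt to your endpoint and returns the reply text.

```python
from typing import Callable, Dict, List

# The four identity prompts from Method 1.
IDENTITY_PROMPTS = [
    "Who are you?",
    "Which company developed you?",
    "What is your model name and version?",
    "What is your knowledge cutoff date?",
]

# Assumption: an illustrative list of other vendor or product names.
# A genuine reply such as "I am not ChatGPT" would also match, so review hits by hand.
FOREIGN_NAMES = ["openai", "chatgpt", "gpt-4", "gemini", "llama", "qwen", "deepseek", "kiro"]


def check_identity(replies: List[str]) -> Dict[str, object]:
    """Heuristically scan identity replies collected across repeated runs."""
    lowered = [r.lower() for r in replies]
    mentions_claude = sum("claude" in r or "anthropic" in r for r in lowered)
    foreign = sorted({name for r in lowered for name in FOREIGN_NAMES if name in r})
    return {
        "mentions_claude": mentions_claude,
        "foreign_names": foreign,
        # Suspicious if another product is named or Claude/Anthropic never appears.
        "suspicious": bool(foreign) or mentions_claude == 0,
    }


def collect_replies(ask: Callable[[str], str], rounds: int = 3) -> List[str]:
    """Send every identity prompt `rounds` times through the caller-supplied `ask`."""
    return [ask(prompt) for _ in range(rounds) for prompt in IDENTITY_PROMPTS]
```

Running `check_identity(collect_replies(ask))` several times, ideally with rephrased prompts, gives a quick view of whether the answers stay stable.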

Method 2: Claude-Specific Knowledge Verification

Test with questions that the real Claude tends to answer more accurately and in more depth than other models.

Test Prompt:

What is Constitutional AI? Please explain its core principles.

Expected Key Points from Real Claude:

- Constitutional AI is an AI alignment method proposed by Anthropic

- It uses a set of “constitutional” principles to guide the model’s self-correction

- It consists of two phases: supervised learning and reinforcement learning

- Anthropic uses it as a core technique in Claude’s safety training

Other models (such as GPT or Gemini) typically provide shallower or less accurate descriptions of Constitutional AI. The method is published Anthropic research, so other models may know of it, but Claude was trained with it and tends to describe it in more depth.
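One way to compare answers across providers is a simple keyword coverage score over the key points listed above. This is a minimal sketch under stated assumptions: the phrase lists in `KEY_POINTS` are illustrative rather than an official rubric, and a high score only shows that the topics were mentioned, not that the explanation is correct.

```python
from typing import Dict

# Key points from the list above, mapped to phrases that suggest coverage.
# Assumption: these phrase lists are illustrative and should be tuned by hand.
KEY_POINTS = {
    "proposed_by_anthropic": ["anthropic"],
    "guiding_principles": ["principle"],
    "supervised_phase": ["supervised"],
    "rl_phase": ["reinforcement learning", "rlaif"],
}


def coverage(answer: str) -> Dict[str, bool]:
    """Report which key points the answer appears to mention."""
    text = answer.lower()
    return {point: any(p in text for p in phrases) for point, phrases in KEY_POINTS.items()}


def coverage_score(answer: str) -> float:
    """Fraction of key points mentioned, between 0.0 and 1.0."""
    hits = coverage(answer)
    return sum(hits.values()) / len(hits)
```

Scores are most useful when compared against a baseline answer collected from a source you already trust.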

Method 3: Behavioral Fingerprint Testing

Different models have distinct style preferences on specific tasks. You can identify them through the following tests:

Test 1: XML Tag Usage Tendency

Please analyze the pros and cons of the following text in a structured format: [any text]

Real Claude tends to organize output using XML tags like <analysis>, <pros>, and <cons> — a characteristic rooted in its training data.
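To make the XML tag tendency measurable, the output can be scanned for paired tags. The helper below is a hypothetical sketch (`xml_tag_counts` is not part of any library) and treats a tag as used only when both its opening and closing forms appear in the output.

```python
import re
from collections import Counter

# Matches simple opening tags such as <analysis> (no attributes, no closing slash).
TAG_RE = re.compile(r"<([A-Za-z][\w-]*)>")


def xml_tag_counts(output: str) -> Counter:
    """Count opening tags that also have a matching closing tag in the output."""
    opened = Counter(TAG_RE.findall(output))
    return Counter({tag: n for tag, n in opened.items() if f"</{tag}>" in output})
```

An empty result on a single response proves little; the signal is the rate of tagged outputs over many runs of the same prompt.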

Test 2: Chain-of-Thought Format

When Extended Thinking is enabled or step-by-step reasoning is requested, real Claude produces a chain of thought with clear structural hierarchy, and the reasoning process stays consistent with the final conclusion.
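The structural part of this check can be sketched as follows, assuming the response content arrives as a list of blocks whose `type` is `thinking` (or `redacted_thinking`) for reasoning and `text` for the final answer. Those type names are an assumption that should be confirmed against the current API documentation, and the helper names are hypothetical.

```python
from typing import Any, List


def block_type(block: Any) -> str:
    """Read the block type from either a dict or an SDK-style object."""
    if isinstance(block, dict):
        return str(block.get("type", ""))
    return str(getattr(block, "type", ""))


def thinking_structure_ok(content: List[Any]) -> bool:
    """True if at least one thinking block appears before the final text block."""
    types = [block_type(b) for b in content]
    if "text" not in types:
        return False
    last_text = len(types) - 1 - types[::-1].index("text")
    return any(t in ("thinking", "redacted_thinking") for t in types[:last_text])
```

This only verifies the shape of the response. Whether the reasoning agrees with the conclusion still needs a human read.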

Test 3: Refusal Boundary Testing

Send the model a mildly policy-violating request (e.g., asking it to generate misleading content) and observe how it refuses. Real Claude has a distinctive refusal style — it explains the reason and offers alternative approaches.

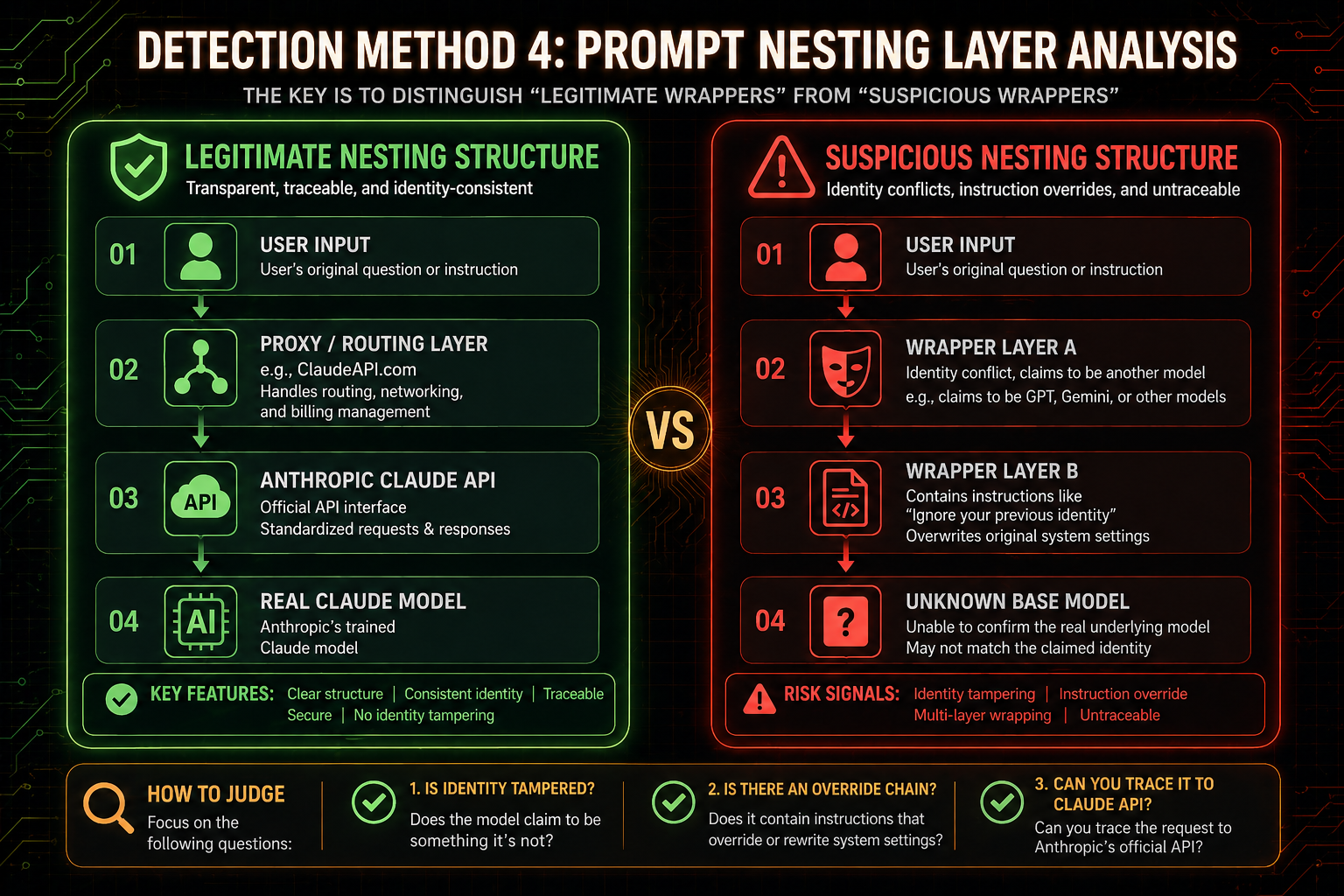

Method 4: Prompt Nesting Layer Analysis

Legitimate Claude services have normal encapsulation layers. The key is distinguishing between “legitimate wrapping” and “suspicious wrapping.”

Detection Method:

Ask the model whether it can detect any system prompt, and what the general structure of the system prompt looks like. Legitimate wrappers typically don’t actively hide their existence, while suspicious wrappers often deny that any system prompt exists or refuse to discuss the topic altogether.

Method 5: Capability Boundary Alignment Verification

Test the model’s performance ceiling on specific tasks to determine whether it matches the officially published specifications.

Test Dimensions:

| Test Item | Expected Performance for claude-opus-4-7 |
|---|---|
| Context Length | Supports up to 200K tokens input |
| Extended Thinking | Supported, with complete thinking block structure |
| Multilingual Ability | Natural switching between languages with no confusion |
| Code Generation | Accurate implementation of complex algorithms with proper comments |
| Long Document Comprehension | Complete extraction of key information from very long texts |

If any capability is noticeably weaker than the official specifications, be alert to the possibility that the underlying model has been swapped for a lower-performing version.
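For the context length and long document rows, one common probe is to hide a short fact in a long filler text and ask for it back. The sketch below is illustrative only: the function names are hypothetical, the filler sentence is arbitrary, and no token counting is done, so `filler_sentences` has to be sized by trial against the limit you want to test.

```python
import random
from typing import Tuple


def build_needle_prompt(filler_sentences: int, seed: int = 0) -> Tuple[str, str]:
    """Build a long prompt with one hidden six-digit code; return (prompt, code)."""
    rng = random.Random(seed)
    secret = f"{rng.randrange(10**6):06d}"
    filler = ["The quick brown fox jumps over the lazy dog."] * filler_sentences
    # Insert the needle at a random position so it is not always at the edges.
    filler.insert(rng.randrange(len(filler) + 1), f"The verification code is {secret}.")
    question = "What is the verification code mentioned in the text above? Reply with the digits only."
    return " ".join(filler) + "\n\n" + question, secret


def needle_found(reply: str, secret: str) -> bool:
    """True if the model's reply contains the hidden code."""
    return secret in reply
```

A service that truncates long inputs or routes to a model with a smaller context window will tend to fail this probe as `filler_sentences` grows.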

Comprehensive Assessment Criteria

Based on the results of all 5 tests above, make a comprehensive judgment:

| Conclusion | Characteristics |
|---|---|
| ✅ Official Claude | Consistent identity, accurate knowledge, behavior matches expectations, no abnormal wrapping |
| ⚠️ Legitimately Wrapped Claude | Underlying model is real Claude, with normal proxy or application-layer wrapping |
| ⚠️ Suspicious Wrapping Detected | Some tests show anomalies, identity claims contain contradictions |
| ❌ Fake Model | Multiple tests fail, behavior clearly inconsistent with Claude characteristics |
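The table can be turned into a small decision helper. This is a sketch under assumptions, not a definitive rule: the method keys, the `wrapper_present` flag, and the `fake_threshold` of three failed tests are all choices made for illustration.

```python
from typing import Dict

# Hypothetical keys, one per detection method in this guide.
METHODS = ["identity", "knowledge", "behavior", "wrapping", "capability"]


def verdict(results: Dict[str, bool], wrapper_present: bool = False,
            fake_threshold: int = 3) -> str:
    """Map pass/fail results of the five methods to one of the four conclusions."""
    failures = sum(1 for name in METHODS if not results.get(name, False))
    if failures == 0:
        # All tests pass; a benign proxy or app layer may still be in place.
        return "Legitimately Wrapped Claude" if wrapper_present else "Official Claude"
    if failures >= fake_threshold:  # assumption: "multiple" means three or more
        return "Fake Model"
    return "Suspicious Wrapping Detected"
```

Missing keys count as failures, so a partially run test suite never yields a clean verdict.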

Choosing a Trustworthy Claude API Service

The foundation of verifying model authenticity is choosing a transparent and trustworthy access provider.

ClaudeAPI.com sources its model capacity from official Anthropic channels and does not modify or replace the models in any way — it only provides network-layer relay and billing management. Users can verify the underlying model’s authenticity themselves using any of the detection methods described above.

Currently Supported Models:

| Model | Model ID |
|---|---|
| Claude Opus 4.7 | claude-opus-4-7 |
| Claude Opus 4.6 | claude-opus-4-6 |
| Claude Sonnet 4.6 | claude-sonnet-4-6 |
| Claude Haiku 4.5 | claude-haiku-4-5-20251001 |

import anthropic

client = anthropic.Anthropic(
    api_key="your-api-key",
    base_url="https://gw.claudeapi.com"
)

# Verify model identity
response = client.messages.create(
    model="claude-opus-4-7",
    max_tokens=1024,
    messages=[{"role": "user", "content": "Who are you? What is your knowledge cutoff date?"}]
)

print(response.content[0].text)

For more information on model integration and security, visit claudeapi.com for the full documentation.